A Brief History of Biosignal-Driven Art

From biofeedback to biophysical performance

This article describes the evolution of the field of biosignal-driven art. It gives an account of the various historical periods of activity related to this practice and outlines current practices. It poses the question of whether this is an artistic field or if it is only a collection of disassociated practices with mere technical aspects in common. The aim is to draw commonalities and artistic differences between the works of different practitioners and encourage academic and artistic discussion about the key issues of the field.

Biosignal monitoring in interactive arts, although present for over fifty years, remains a relatively little known field of research within the artistic community as compared to other sensing technologies. Since the early 1960s, an ever-increasing number of artists have collaborated with neuroscientists, physicians and electrical engineers, in order to devise means that allow for the acquisition of the minuscule electrical potentials generated by the human body. This has enabled direct manifestations of human physiology to be incorporated into interactive artworks. However, the evolution of this field has not been a continuous process. It would seem as if there has been little communication amongst practitioners, and historically there have been various sudden periods of activity that seem to have very little relation to past works and also a very limited influence in later works beyond some technical methodologies and general operating metaphors.

This paper presents an introduction to this field of artistic practice that uses human physiology as its main element.

Background

The Human Nervous System

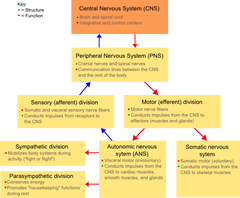

It is possible to think of the human nervous system as a complex network of specialised cells that communicate information about the organism and its surroundings (Maton et al., 1994). In gross anatomy, the nervous system is divided into two sub-systems: the Central Nervous System (CNS) and the Peripheral Nervous System (PNS). The CNS is the largest part of the nervous system. For humans, it includes the brain and the spinal cord. It is responsible for coordinating the activity of all parts of the body. It processes information, is responsible for controlling the activity of the peripheral nervous system, and plays a fundamental role in the control of behaviour.

The PNS extends the CNS by providing a connection to the body’s limbs and organs. The PNS provides a means for sensing the outside world and for manifesting volitional actions upon it. The PNS is further divided into the Autonomic Nervous System (ANS) and the Somatic Nervous System (SNS). The SNS is a component of the peripheral system that is concerned with sensing external stimuli from the environment and is responsible for the volitional control of the skeletal muscles that allow us to interact with the outside world (Knapp, Kim and André 2010). The ANS controls the internal sensing of the various elements that form the nervous system. It regulates involuntary responses to internal and external events and is further sub-divided into the sympathetic nervous system, which is responsible for physiological changes during times of stress, and the parasympathetic nervous system, which controls salivation, lacrimation, urination, digestion and defecation during the resting state. Figure 1 illustrates the taxonomy and organisation of the Central Nervous System.

There are various techniques and methodologies available to monitor the operation of the nervous system. Changes in human physiology manifest themselves in various ways, ranging from changes in physical properties (e.g. dilatation of the pupils) to changes in electrical properties of organs or specialised tissues (e.g. changes in electrical conductivity of the skin).

Biosignals

Biosignal is a generic term that encompasses a wide range of continuous phenomena related to biological organisms. In common practice, the term is used to refer to signals that are bio-electrical in nature, and that manifest as the change in electrical potential across a specialised tissue or organ in a living organism. They are an indicator of the subject’s physiological state. Biosignals are not exclusive to humans, and can be measured in animals and plants. The tissues involved can be roughly divided into excitable tissues that generate electrical activity, such as nerves, skeletal muscles, cardiac muscle and smooth muscles, and passive tissues that also manifest a small difference of potential, such as the skin and the eyes. Valentinuzzi defines the latter as “non-traditional sources of bioelectricity” (Valentinuzzi 2004, 219).

Biosignal monitoring has had a long tradition in healthcare ever since Italian physician Luigi Galvani discovered “animal electricity” in 1791 (Galvani 1791; Galvani 1841; Piccolino 1998), which was confirmed three years later by Humboldt and Aldini (Aldini 1794; Swartz and Goldensohn 1998). [1. For a more detailed definition of biosignals and their use in the fields of medicine, psychology and bioengineering instrumentation, please see Cacioppo, Tassinary and Berntson (2007) and Webster (1997).]

Galvanic Skin Response (GSR)

Galvanic Skin Response (GSR) is the change of the skin’s electrical conductance properties caused by stress and/or changes in emotional states (McCleary 1950). It reflects the activity of sweat glands and the changes in the sympathetic nervous system (Fuller 1977), and is an indicator of overall arousal state. The signal is measured at the palm of the hands or the soles of the feet using two electrodes between which a small, fixed voltage is applied and the resulting conductance is measured. Changes in the skin’s resistance are caused by activity of the sweat glands; for example, when a subject is presented with a stress-inducing stimulus, his/her skin conductivity will increase as the perspiratory glands secrete more sweat.
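The measurement principle described above can be illustrated with a minimal sketch. It assumes that the current flowing between the two electrodes is what is actually read, and that conductance follows from Ohm's law (G = I / V). The function names, the 0.5 V excitation voltage and the current values are hypothetical and do not describe any particular GSR device.

```python
def skin_conductance_microsiemens(applied_volts, measured_amps):
    """Return skin conductance G = I / V, expressed in microsiemens."""
    if applied_volts <= 0:
        raise ValueError("applied voltage must be positive")
    return measured_amps / applied_volts * 1e6


def conductance_changes(conductances):
    """Return sample-to-sample differences; positive values mean rising conductance."""
    return [b - a for a, b in zip(conductances, conductances[1:])]


# Hypothetical values chosen only for illustration.
APPLIED_VOLTS = 0.5
currents_amps = [1.0e-6, 1.1e-6, 1.6e-6]

readings = [skin_conductance_microsiemens(APPLIED_VOLTS, i) for i in currents_amps]
print(readings)
print(conductance_changes(readings))
```

In this sketch a run of positive differences would correspond to the increase in skin conductivity that follows a stress-inducing stimulus.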

The GSR signal is easy to measure and reliable. It is one of the main components of the original polygraph or “lie detector” (Marston 1938) and is one of the most common signals used in both psycho-physiological research and the field of affective computing (Picard 1997).

Electrocardiogram (ECG)

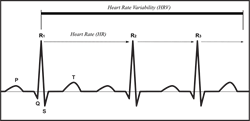

The ECG is a measurement of the electrical activity of the heart as it progresses through the stages of contraction. Figure 2 shows the components of an ideal ECG signal.

In Human Computer Interaction (HCI) systems for non-clinical applications, the Heart Rate (HR) and Heart Rate Variability (HRV) are the most common features measured. For example, low and high HRs can be indicative of physical effort. In affective computing research, if physical activity is constant, a low HRV is commonly correlated to a state of relaxation, whereas an increased HRV is common to states of stress or anxiety (Haag et al., 2004).
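As a rough illustration of how these two features might be computed, the sketch below derives HR and two simple HRV measures from a list of intervals between successive heartbeats (R-R intervals, in seconds). The text above does not specify which HRV measure is meant; the standard deviation of the intervals (often called SDNN) and the root mean square of successive differences (RMSSD) are used here as assumptions, and the interval values are invented.

```python
from math import sqrt
from statistics import mean, stdev


def heart_rate_bpm(rr_intervals_s):
    """Mean heart rate in beats per minute from R-R intervals in seconds."""
    return 60.0 / mean(rr_intervals_s)


def sdnn_ms(rr_intervals_s):
    """Sample standard deviation of the R-R intervals, in milliseconds."""
    return stdev(rr_intervals_s) * 1000.0


def rmssd_ms(rr_intervals_s):
    """Root mean square of successive R-R differences, in milliseconds."""
    diffs = [b - a for a, b in zip(rr_intervals_s, rr_intervals_s[1:])]
    return sqrt(mean([d * d for d in diffs])) * 1000.0


# Invented R-R intervals, for illustration only.
rr = [0.80, 0.82, 0.78, 0.81, 0.79]
print(heart_rate_bpm(rr), sdnn_ms(rr), rmssd_ms(rr))
```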

Electrooculogram (EOG)

EOG is the measurement of the Corneal-Retinal Potentials (CRP) across the eye using electrodes. In most cases, electrodes are placed in pairs to the sides or above/below the eyes. The EOG is traditionally used in HCI to assess eye-gaze and is normally used for interaction and communication by people who suffer from physical impairments that hinder their motor skills (Patmore and Knapp 1998).

Electromyogram (EMG)

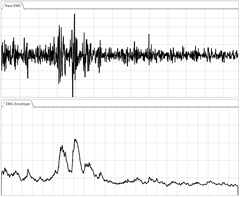

Electromyography is a method for measuring the electrical signal that activates the contraction of muscle tissue. It measures the isometric muscle activity generated by the firing of motor neurons (De Luca and Van Dyk 1975). Motor Unit Action Potentials (MUAPs) are the individual components of the EMG signal that regulate our ability to control the skeletal muscles. Figure 3 illustrates a typical EMG signal and its amplitude envelope.

EMG-based interfaces can recognise motionless gestures (Greenman 2003) across users with different muscle volumes without calibration, measuring only overall muscular tension, regardless of movement or specific coordinated gestures. They are commonly used in the fields of prosthesis control and functional neuromuscular stimulation. For musical applications, EMG-driven interfaces have traditionally been used as continuous controllers, mapping amplitude envelopes to control various musical parameters (Tanaka 1993).
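One common way to obtain an amplitude envelope of the kind used for continuous control is to rectify the signal and smooth it with a moving average. The sketch below shows that technique under stated assumptions: the window length and the sample values are arbitrary, and the approach is not claimed to be the one used in any of the cited systems.

```python
def amplitude_envelope(samples, window=4):
    """Full-wave rectify the signal, then smooth it with a trailing moving average."""
    if window < 1:
        raise ValueError("window must be at least 1")
    rectified = [abs(s) for s in samples]
    envelope = []
    running = 0.0
    for i, value in enumerate(rectified):
        running += value
        if i >= window:
            # Drop the sample that has just left the averaging window.
            running -= rectified[i - window]
        envelope.append(running / min(i + 1, window))
    return envelope


# Invented bipolar samples standing in for a short burst of EMG activity.
emg = [0.0, 0.0, 2.0, -2.0, 1.0, -1.0]
print(amplitude_envelope(emg, window=2))
```

The resulting envelope is a slowly varying positive signal, which is the form usually needed when muscular tension is mapped onto a musical parameter.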

Mechanomyogram (MMG)

MMG is an alternative technology to measure muscle activity. Unlike EMG, MMG is not an electrical signal, but a mechanical one. The contraction of skeletal muscles creates a mechanical change in the shape of the muscle and subsequent oscillations of the muscle fibres at the resonant frequency of the muscles. These vibrations can be audible and are effectively measured by contact microphones and/or accelerometers (Miranda and Wanderley 2006). Due to the characteristics of the signal, the MMG is also known as the acoustic myogram (AMG), phonomyogram (PMG) and vibromyogram (VMG).

EMG and MMG signals share many of their characteristics and could be regarded as different techniques to measure the same phenomenon. However, there is an important difference that can be explored in artistic contexts. EMG measures muscle fibre action potentials; that is, it measures the activation and firing of motor neurons to trigger contraction of the muscle. MMG measures the actual mechanical contraction of the muscle fibres. This means that there is an important decoupling between both signals as repeated actions and muscle fatigue develop during any given activity (Barry, Geiringer and Ball 1985).

Electroencephalogram (EEG)

The Electroencephalogram (EEG) monitors the electrical activity caused by the firing of cortical neurons across the brain’s surface. In 1924, German neurologist Hans Berger measured these electrical signals in the human brain for the first time and provided the first systematic description of what he called the Electroencephalogram (EEG). In his research, Berger noticed spontaneous oscillations in the EEG signals (Rosenboom 1999) and identified rhythmic changes that varied as the subject shifted his or her state of consciousness. These variations, which would later be given the name of alpha waves, were originally known as Berger rhythms (Berger 1929, 355; Gloor 1969; Adrian and Matthews 1934).

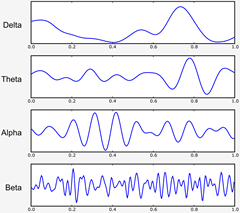

Brainwaves are an extremely complex signal. In surface EEG monitoring, any given electrode picks up waves pertaining to a large number of firing neurons, each with different characteristics indicating different processes in the brain. The resulting large amount of data that represents brain activity creates a difficult job for physicians and researchers attempting to extract meaningful information.

Brainwaves have been categorised into four basic groups or bands of activity related to frequency content in the signals: Alpha, Beta, Theta and Delta (Lusted and Knapp 1996). Figure 4 shows each of the frequency bands as displayed by an EEG monitoring system.

This categorisation, however, is the source of some controversy, as some researchers recognise up to six different frequency bands (Miranda et al., 2003). Furthermore, the exact frequency at which each band is divided from the rest is not cast in stone and one might find discrepancies of up to 1 Hz in various texts dealing with the subject. The following categorisation is based on the guidelines provided by the International Federation of Electrophysiology and Clinical Neurophysiology (Steriade et al., 1990):

Delta waves are slow periodic oscillations in the brain that lie within the range of 0.5–4 Hz and appear when the subject is in deep sleep or under the influence of anæsthesia.

Theta waves lie within the range of 4–7 Hz and appear as consciousness slips toward drowsiness. They have been associated with access to unconscious material, creative inspiration and deep meditation.

Alpha rhythm has a frequency range that lies between 8 and 13 Hz. Alpha waves have been thought to indicate both a relaxed awareness and the lack of a specific focus of attention. In holistic terms, it has been often described as a “Zen-like state of relaxation and awareness”.

Beta refers to all brainwave activity above 14 Hz and is further subdivided into 3 categories:

- Slow beta waves (15–20 Hz) are the usual waking rhythms of the brain associated with active thinking, active attention, focus on the outside world or solving concrete problems.

- Medium beta waves (20–30 Hz): this state occurs when the subject is undertaking complex cognitive tasks, such as making logical conclusions, calculations, observations or insights (Rosenboom 1999).

- Fast beta waves (Over 30 Hz): this frequency band is often called Gamma and is defined as a state of hyper-alertness, stress and anxiety (Miranda et al., 2003). It is found when performing a reaction-time motor task (Sheer 1989).
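The categorisation above can be expressed as a simple lookup table. The sketch below uses exactly the ranges listed in this section; because those ranges leave small gaps (for instance between 7 and 8 Hz), frequencies falling in a gap are reported as unclassified. Treating the band edges as inclusive and assigning a shared edge to the lower band is an assumption made here for illustration, as is the function name.

```python
from math import inf

# (name, lower edge in Hz, upper edge in Hz), in ascending order.
BANDS = [
    ("delta", 0.5, 4.0),
    ("theta", 4.0, 7.0),
    ("alpha", 8.0, 13.0),
    ("slow beta", 15.0, 20.0),
    ("medium beta", 20.0, 30.0),
    ("fast beta (gamma)", 30.0, inf),
]


def classify_frequency(freq_hz):
    """Return the name of the first band whose range contains freq_hz."""
    for name, low, high in BANDS:
        if low <= freq_hz <= high:
            return name
    return "unclassified"


for f in (2.0, 5.5, 7.5, 10.0, 18.0, 25.0, 40.0):
    print(f, classify_frequency(f))
```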

Biosignal-driven Interactive Arts

In 1919, German poet Rainer Maria Rilke wrote an essay entitled Primal Sound, in which he stresses the visual similarity between the surface of the human skull and that of early phonograph wax cylinders. He then speculates about the possibility of transducing the skull’s grooves into this primal sound using technology similar to that used for the playback of wax cylinders. In the author’s own words:

The coronal suture of the skull has — let us assume — a certain similarity to the closely wavy line which the needle of a phonograph engraves on the receiving, rotating cylinder of the apparatus. What if one changed the needle and directed it on its return journey along translation of a sound, but existed of itself naturally — well, to put it plainly, along the coronal suture, for example. What would happen? A sound would necessarily result, a series of sounds, music.… Feelings — which? Incredulity, timidity, fear, awe — which of all the feelings here possible prevents me from suggesting a name for the primal sound which would then make its appearance in the world… (Rilke 1978)

Although Rilke never implemented the necessary interface to generate the primal sound, his idea is extremely seductive in its conception and the artistic-æsthetic implications it proposes. Rilke’s text captures the fascination that many artists hold for the possibility of using physiological phenomena to create art. The implication being that something inherently “human-body-like” might result from the direct sonification of bodily features and physiological functions.

Early Pieces and the Biofeedback Paradigm

In the 1960s, a whole generation of artists indeed reappropriated medical tools and developed systems to harness the subtle physiological changes of the human body. These pioneers slowly created a decentralised movement that sought inspiration in medical science to create works that relate to the human being at a physiological level.

In 1964, American composer Alvin Lucier began working with physicist Edmond Dewan and became the first composer to make use of biosignals in an artistic context. His piece Music for Solo Performer, scored for “enormously amplified brainwaves”, was premiered at Brandeis University in 1965 (Holmes 2002).

Lucier’s piece explores the rhythmic modulations of the alpha band of brainwaves by means of direct audification and with the addition of percussion instruments — namely cymbals, drums and gongs — which were coupled to large speakers (Teitelbaum 1976). High bursts of alpha activity would cause the speakers to excite the acoustic instruments, which in turn activated a disembodied percussion ensemble.

Music for Solo Performer challenged the very notions of performance and composition. The work is not prescriptive and the composer does not have full control over the end sound result. Similarly, there is no active performance activity, at least not in the way it was understood at the time. The process itself is the music, a feature that is characteristic of Lucier’s musical æsthetic. Regarding the piece Lucier stated:

To release alpha, one has to attain a quasi-meditative state while at the same time monitoring its flow. One has to give up control to get it. In making Music for Solo Performer (1965), I had to learn to give up performing to make the performance happen. By allowing alpha to flow naturally from mind to space without intermediate processing, it was possible to create a music without compositional manipulation or purposeful performance. (Siegmeister et al., 1979)

Lucier’s pioneering use of EEG signals for music composition was quickly adopted by other composers, most notably Richard Teitelbaum and David Rosenboom. Teitelbaum had been working in Rome since the early 1960s as part of the group Musica Elettronica Viva (MEV). In 1967, he presented his work Spacecraft, in which EEG and ECG signals of five performers were used to control various sound and timbre parameters of a Moog synthesiser (Arslan et al., 2005). A year later, Finnish multimedia artist Erkki Kurenniemi attended a music conference organised by the Teatro Comunale in Florence, Italy. During the conference, he was exposed to Manford Eaton’s ideas of biofeedback as a source for musical practice (Ojanen et al., 2007). Kurenniemi then designed the Dimi-S and Dimi-T, two electronic musical instruments that measure changes in the electroresistance of the human skin and the production of brainwaves respectively.

During the following years, Teitelbaum explored biosignals further. His 1968 compositions Organ Music and In Tune incorporated the use of the voice and breathing sounds in order to create a close relationship between the resulting music and the human body that generated it (Teitelbaum 1976).

David Rosenboom carried on Teitelbaum’s explorations and, in 1970, presented Ecology of the Skin, a work that measures EEG and ECG signals of performers and audience members (Rosenboom 1999). He was the first artist to undertake systematic research into the potential of brainwaves for artistic applications, creating a large body of works and developing a series of systems that increasingly improved the means of detecting cognitive aspects of musical thinking for real-time music making.

The following year, musique concrète pioneer Pierre Henry began collaborating with scientist Roger Lafosse, who was undertaking research into brainwave systems. This collaboration spawned a highly complex and sophisticated live performance system entitled Corticalart (Henry 1971). During the same year, Manford Eaton, who was working at Orcus Research in Kansas City, published Bio-Music (Eaton 1971), a manifesto in which he describes in great detail the apparatus and methods to implement a full biofeedback system for artistic endeavours. In it, he calls for a completely new biofeedback-based art in which the intentions of the composer are “fed directly” to the listener by means of careful monitoring and manipulation of the listener’s physiological signals.

Eaton’s system consisted of both audio and visual stimuli for the listener, designed to elicit pre-defined psycho-physiological states which are controlled by the composer. Therefore, his Bio-Music ethos abandons the division between performer and audience. Bio-Music compositions are neither to be “listened to” nor “witnessed” by a large audience, but to be experienced by individual listeners. The composer/performer adapts his or her algorithms and the presented stimuli to the subject’s individual physiological responses, delivering a consistent “message” or experience for each individual that experiences the work. In Eaton’s Bio-Music, the specific sounds or images presented to the listener are irrelevant as long as they succeed in modulating the subject’s physiological state to that desired by the composer.

Post Biofeedback Practice

Towards the end of the 1980s, the advent of digital signal processing systems and the wide availability of powerful personal computer systems made it possible for researchers to further develop the existing techniques for biosignal analysis in real-time applications. In 1988, California-based scientists Benjamin Knapp and Hugh Lusted introduced the BioMuse system (Knapp and Lusted 1988), which consisted of a signal-capturing unit that sampled eight channels of biosignals, which were then amplified, conditioned and translated to MIDI messages. The sensors were implemented as simple limb-worn Velcro bands that were able to capture EMG, EEG, EOG, ECG and GSR signals. The BioMuse system facilitated not only the analysis of the signals, but also the ability to use the results of the analysis to control other electronics in a precise and reproducible manner that had not been previously possible (Knapp and Lusted 1990). This allowed them to introduce the concept of biocontrol, an important conceptual shift from the original biofeedback paradigm that had reigned unchallenged during the 1970s. Whilst biofeedback allowed for physiological states to be monitored and, relatively passively, translated to other media by means of sonification, biocontrol proposed the idea and means to create reproducible volitional interaction using physiological data as input (Tanaka 2009).
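To make the idea of translating a conditioned biosignal into MIDI more concrete, the following sketch scales a sensor reading into the 7-bit range used by MIDI and packs it into a Control Change message (status byte 0xB0 plus the channel number, followed by the controller number and the value). The source does not describe the mapping actually used by the BioMuse; the function names, the calibration range and the choice of controller 7 are assumptions made for illustration.

```python
def to_midi_value(reading, low, high):
    """Scale a reading from the calibration range [low, high] to an integer 0-127."""
    if high <= low:
        raise ValueError("high must be greater than low")
    normalised = (reading - low) / (high - low)
    normalised = min(1.0, max(0.0, normalised))  # clamp out-of-range readings
    return round(normalised * 127)


def control_change(channel, controller, value):
    """Build the three bytes of a MIDI Control Change message."""
    if not 0 <= channel <= 15:
        raise ValueError("channel must be in 0-15")
    if not 0 <= controller <= 127 or not 0 <= value <= 127:
        raise ValueError("controller and value must be in 0-127")
    return bytes([0xB0 | channel, controller, value])


# Hypothetical envelope reading, calibrated between 0.0 (rest) and 1.0 (full tension).
message = control_change(0, 7, to_midi_value(0.25, 0.0, 1.0))
print(message.hex())
```

Because the same reading always yields the same message, a mapping of this kind is reproducible in the sense that the biocontrol paradigm requires.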

In order to fully demonstrate the possibilities afforded by their system, Knapp and Lusted commissioned composer Atau Tanaka to write the first piece for their new interface. The BioMuse’s maiden concert took place in Stanford, California in 1989. In that concert, Tanaka premiered Kagami, a piece that used EMG signals measuring muscular tension on his forearms (Keislar et al., 1993). This introduced a novel biosignal performance practice that consisted of a highly personal visual and sonic style of biosignal-driven music and stage presence, moving from the archetypal image of the motionless centre-stage-seated bio-performer pioneered by Lucier, to a dynamic musician that explored arm gestures in a highly engaging way.

In 1998, Teresa Marrin-Nakra and Rosalind Picard, who were carrying out research in the field of affective computing at the Massachusetts Institute of Technology (MIT), created The Conductor’s Jacket, a wearable computing device that facilitated the measuring and recording of physiological and kinematic signals from orchestra conductors (Marrin and Picard 1998). Even though The Conductor’s Jacket was originally conceived as a recording and monitoring device for scientific enquiry, its ability to stream data in real time allowed Nakra to use it in performance contexts, where it functioned not as a passive monitoring device but as a disembodied musical instrument.

Biosignals in the 21st Century

The Early 2000s

The turn of the 21st century brought with it a renewed worldwide interest in biosignals for artistic applications, as many favourable factors converged. On the one hand, personal computers became powerful enough to deal with these types of signals. Likewise, the evolution of the BioMuse and other biosignal measuring devices created by the affective computing team at MIT meant that it was now possible to ecologically measure physiological signals from performers in stage situations in a transparent and effective way. [2. The term “ecological” is often used in the field in similar contexts to refer to measurement techniques that do not impede the natural execution of actions by performers.] Moreover, commercially available medical equipment such as the g.MOBIlab, Emotiv’s EPOC, MindMedia’s Nexus units and Thought Technology’s Infiniti systems became more affordable and easier to use. This contrasts with the complicated and extremely costly systems that were available to artists in the 1970s. The popularisation of the internet, as well as the establishment of international conferences and symposia that dealt with musical interactive systems, meant that information could be shared by artists and researchers at faster rates than ever before, allowing for a steady incremental development of biosignal interfaces and art works created for these novel devices.

This makes the issue of meaning and content more relevant than ever. The various technologies that facilitate the measurement of biosignals, as well as their correlates to human emotion, have undergone great development, yet the associated approaches and metaphors that artists use to create works using these technologies remain relatively unchanged.

In 2002, Australian artist Tina Gonsalves began to explore the ways in which her artworks could monitor the audience’s emotional states, through “the use of bio-metric sensors as triggers for emotional video narratives, leading to both more immersive installations, as well as intimate ubiquitous works” (Gonsalves 2009).

Her work explores issues of intimacy, empathy and emotional behaviour in humans through immersive audio-visual installations. According to Gonsalves:

Nothing seems as private as the bodily experience of raw emotion. Emotions are a common thread that every human being can read, understand, and share. Emotions influence all aspect of behaviour and subjective experience; grabbing attention, enhancing or blocking memories and swaying logical thought. Emotions spread in social collectives almost by contagion. In cohesive social interactions, we are highly attuned to subtle and covert emotional signals. Our behaviours often mirror each other in minute detail. At times, we may voluntarily suppress our emotional reactions, temporarily disguising our intentions or vulnerability, though “true” emotions are nevertheless evident in a pattern of internal bodily responses that set an underlying tone for behaviour. (Gonsalves 2009)

In 2004, during the International Conference on Auditory Displays (ICAD), Stephen Barrass organised a practice-led research project entitled Listening to the Mind Listening. The project consisted of an open call for composers to write a piece based on the brain activity of a person listening to a piece of music. The data-set was recorded from the brain activity generated by the chief executive officer of the Brain Resource company, Evian Gordon, as he listened to David Page’s composition Dry Mud (1997). The essential criteria for the pieces submitted were as follows (Barrass 2004):

- Data-driven. Sonification is a mapping of data into sounds for some purpose. The sonification should be the result of an explicit mapping from the data into sounds. The listener should be able to understand relations and structures in the data from the sonification.

- Time is the binding. The timeline of the data must map directly to the timeline of the sonification. All other mapping decisions are completely open but we need to be able to compare pieces across time, and also compare them with the original data set and source piece of music. This means that the final sonification pieces will all be exactly the same duration as the data set, and original piece of music.

- Reproducibility. The mapping of the data into sound must be described in a manner that can be reproduced by others. Mappings should be described explicitly. Different mappings will enable different perceptions of information in the data. The experiment should lay a foundation for scientific and æsthetic observations and ongoing development by the research community.
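The three criteria above can be illustrated with a minimal sketch of an explicit, time-preserving mapping. Each data sample keeps its original position on the timeline and is mapped linearly onto a frequency range, so the mapping is data-driven, bound to time and fully described by a handful of parameters. The frequency range, the sample rate and the function name are assumptions chosen for illustration; this is not a reconstruction of any piece submitted to the project.

```python
def sonification_events(samples, sample_rate_hz, low_hz=220.0, high_hz=880.0):
    """Map each sample to an (onset time in seconds, frequency in Hz) pair.

    The onset time is derived only from the sample index, so the timeline of
    the output matches the timeline of the data.
    """
    lowest, highest = min(samples), max(samples)
    span = highest - lowest
    events = []
    for index, value in enumerate(samples):
        position = 0.0 if span == 0 else (value - lowest) / span
        frequency = low_hz + position * (high_hz - low_hz)
        events.append((index / sample_rate_hz, frequency))
    return events


# Invented data standing in for one channel of a recorded data set.
for onset, freq in sonification_events([0.0, 5.0, 10.0], sample_rate_hz=2.0):
    print(onset, freq)
```

Publishing the parameter values alongside a function of this kind would be one way of meeting the reproducibility criterion.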

The concert was followed by a set of reviews carried out by the composers themselves, sonification scientists, brain scientists and members of the attending audience. According to Barrass, the experiment resulted not only in a successful concert which presented pieces that were æsthetically well received by the audience, but in providing a clear auditory display that could be used for scientific purposes, thanks to the systematic sonification approach to composition (Barrass et al., 2006).

During the same year, London-based artist Christian Nold began the Bio Mapping project, a community-led initiative to emotionally map urban environments. According to Nold, over 1500 people took part throughout the duration of the project. The project consisted of equipping participants with a GSR measuring device and a Global Positioning System (GPS) unit. As the participants walked and interacted with the urban surroundings, their arousal level was measured by the GSR sensor and linked to the specific location where important variations in the signal occurred, thus creating detailed communal emotion maps which display areas within the city that people feel strongly about.
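The principle of linking arousal variations to locations can be sketched as follows. Each sample pairs a GPS position with a GSR reading, and a location is marked whenever the reading rises by more than a chosen threshold relative to the previous sample. The data format, the threshold and the coordinates are hypothetical; the source does not describe how the Bio Mapping data was actually processed.

```python
def emotion_map_points(track, threshold):
    """Return (latitude, longitude, rise) for samples where GSR rose by at least threshold.

    track is a list of (latitude, longitude, gsr_reading) tuples in time order.
    """
    points = []
    for (_, _, previous), (lat, lon, current) in zip(track, track[1:]):
        rise = current - previous
        if rise >= threshold:
            points.append((lat, lon, rise))
    return points


# Invented walk with three samples; only the last one shows a marked rise.
walk = [
    (51.500, -0.120, 2.0),
    (51.501, -0.121, 2.1),
    (51.502, -0.122, 3.0),
]
print(emotion_map_points(walk, threshold=0.5))
```

Aggregating the marked points from many participants would then give a communal map of the kind described above.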

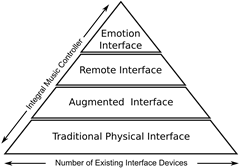

A year later, Ben Knapp and Perry Cook extended the original concept of biocontrol and introduced a conceptual framework which they called the Integral Music Controller (IMC) [Knapp and Cook 2005]. They define four categories of possible musical interfaces based on the type of interaction between performer and the resulting sound as follows:

- Traditional Physical Interface. All acoustic instruments fall into this category. There is a direct physical-acoustic coupling between the performer’s actions and the production of sound.

- Augmented Interface. When one embeds additional sensing modalities to an existing traditional instrument. HyperInstruments fall under this category, the idea being that the interface is augmented to sense events which would not normally produce changes in the instrument’s sound producing or modulating capabilities. For example, a trumpet with pressure sensors on the pistons that can change the sound as more pressure is applied to them, a gesture that does not make any discernable sound difference in a non-augmented trumpet.

- Remote Interface. When there is no direct physical connection between the performance action and the sound being produced. The iconic example for this type of interface is the computer itself, specifically laptop computers which have become pervasive in concert environments.

- Emotion Interface. An interface that allows for motionless emotion-driven interaction between the performer and the resulting sounds. Any of the biofeedback interfaces reviewed so far in this chapter could fit in this category, but only once proper analysis has been carried out to identify and assess emotion.

Based on this categorisation, the IMC can be seen as a framework within which specific musical interfaces (or instruments) that afford all possible interactions can be developed in a transparent way. According to Knapp and Cook (2005), the IMC is defined as a controller that:

- Creates a direct interface between emotion and sound production unencumbered by the physical interface.

- Enables the musician to move between this direct emotional control of sound synthesis and the physical interaction with a traditional acoustic instrument and through all of the possible levels of interaction in between.

Figure 5 shows the IMC pyramid, which illustrates the growing complexity of available interfacing options from simple physico-mechanical interaction to the emotion-driven interaction model. According to Knapp and Cook, the image also illustrates the number of available interfaces for each category, which narrows down as we climb up the pyramid. I would add to this that our understanding as creators of each interactive model follows a similar shape, from the well understood traditional instruments to the complex interaction with biosignal-driven interfaces.

In 2006, the artist duo Terminalbeach collaborated with the Trondheim Sinfonietta to create the Heart Chamber Orchestra (HCO). The HCO project consisted of an audio-visual composition where real-time ECG signals were measured from a group of twelve classically trained musicians. The data generated by the performers’ heartbeats was used to control a computer composition and visualisation environment which generated a score in real time for the musicians to play, as well as electronic sound and visual content. During the performance, the score was constantly changed by the state of the musicians’ heartbeats and vice versa, creating a closed feedback loop system. [3. See Peter Votava and Erich Berger’s article “The Heart Chamber Orchestra: An audio-visual real-time performance for chamber orchestra based on heartbeats” in this issue of eContact! for a discussion and videos of the project.]

Brazilian composer Eduardo Reck Miranda, a researcher at the Interdisciplinary Centre for Computer Music Research at the University of Plymouth, has carried out important research in the field of EEG monitoring by proposing Brain-Computer Musical Interfaces (BCMI) (Miranda and Brouse 2005a, 2005b; Miranda 2006). His research focuses on the identification and classification of music-related cognitive processes for the direct control of generative musical algorithms. Miranda has published a series of articles describing the technical and musical implications of BCMIs. Canadian composer Andrew Brouse has collaborated with Miranda, using BCMIs to create meditative musical compositions. Miranda’s research has also fed back into the medical sciences field.

Current State of the Field

The second half of the 2000s saw an explosion in the use of biosensing technologies in interactive art practice. This started a process of diversification among practitioners and the disciplines they come from. Whilst the early works were pioneered by composers linked to the western contemporary or electroacoustic traditions, the turn of the century brought in a large number of artists from very diverse backgrounds, spanning fine arts, media arts, cinema and philosophy.

In 2005, Belgian researcher Benoît Macq directed a project at the eNTERFACE Summer Workshop on Multimodal Interfaces dedicated to the creation of a musical instrument driven by biosignals (Arslan et al., 2005). The project had great success, and for the next four years the core team assembled by Macq continued participating in the eNTERFACE workshops, improving the design and implementation of their biosignal-driven musical instruments. This allowed the team members to work directly in this field, and it also gave various artists who participated for a single year the opportunity to approach these technologies in collaboration with highly skilled technicians while the instruments were being developed. Among the artists who had this opportunity were Hanna Drayson and Cumhur Erkut.

A year later, BioMuse creator Benjamin Knapp joined the Sonic Arts Research Centre (SARC) at Queen’s University Belfast. There, he founded the Music, Sensors and Emotion (MuSE) research group. MuSE is a multidisciplinary team focused on both qualitatively and quantitatively measuring the relationship between music and emotion and using this information to inform musical composition and performance. The main areas of interest for the team are:

- Integral music control: Using both gestural and emotional interactions to control digital musical instruments.

- The use of physiological and kinematic ambulatory monitoring to measure gesture and emotion during performance.

- Physiological monitoring of audiences during performance.

- Contagion of emotion and physiological signals between performer and audience.

Within these areas, the MuSE team has produced various biosignal-driven interactive installations (Coghlan et al., 2009; Jaimovich 2010) and high-quality scientific research (Jaimovich et al., 2011).

During 2006, my own work with biosignal interfaces started at Queen’s University, where I was one of the founding members of the MuSE team. Following the tradition of contemporary classical music practice, my work has largely focused on the integration of physiological sensing technologies with traditional acoustic instruments. In a way that complements the ongoing research within MuSE, my artistic practice does not focus on the assessment of emotional states but on the intrinsic audible characteristics of biosignals themselves as a source of compositional material and as a medium of performance. In a way that could be compared to hyperinstruments (Machover and Chung 1989), I have focused on extending not the instruments, but the performer. That is, I take the physical actions required to perform on a traditional instrument, and the physiological states that these actions produce in the performer, as the driving element of sound output. A good example of this is my 2010 work S&V for saxophone, violin and heart rate monitor.

During his time as director of MuSE, Knapp went from instrument designer to performer as he founded the Biomuse Trio in 2008 to perform computer chamber music integrating traditional classical performance, laptop processing of sound and the transduction of bio-signals for the control of musical gesture. The work of the ensemble encompasses hardware design, audio signal processing, bio-signal processing, composition, improvisation and gesture choreography. The Biomuse Trio consists of Gascia Ouzounian (violin), Ben Knapp (BioMuse) and Eric Lyon (computer). [4. MuSE research and projects and compositions using the BioMuse system are discussed in “The Biomuse Trio in Conversation: An Interview with R. Benjamin Knapp and Eric Lyon” by Gascia Ouzounian, in this issue of eContact!]

In December 2010, new media and sonic artist Marco Donnarumma presented Music for Flesh I at the University of Edinburgh, a first public performance using his Xth Sense system. Xth Sense is an MMG-driven interactive system for the biophysical generation and control of music (Donnarumma 2011). Drawing from the growing fields of open source software and hardware, Xth Sense is not merely a bespoke system for the sole use of its creator, but a set of tools that can be easily implemented by the community at large and at a very low cost. This marks an important milestone, as it opens the doors for a grassroots, physiologically driven arts practice outside university walls.

In April 2012, the Sonorities Festival at Queen’s University had a special theme titled The Body’s Music. The festival focused on innovative relationships between music and the body, in particular explorations of the combination of body and technology, while delving into the sonic world of the body itself. During the parallel Two Thousand + TWELVE symposium, a plenary session was held with the participation of experts Ben Knapp and Atau Tanaka, along with some of the more active new practitioners: Marco Donnarumma, Miguel Ortiz and Gascia Ouzounian. This open discussion tackled some of the most relevant questions that had previously only been raised in conference hallways and late-night pub sessions, namely:

- Is this a field or just a collection of decentralised individual artistic practices?

- Are there any connections or direct progress from the initial intentions of the early pioneers of the 1960s and current practices?

- Are there any intrinsic musical properties in these signals or are they just a bridge between higher cognitive and emotional states and musical outputs?

- Are there any opportunities for collaboration and focused development of biosignal-driven musical practice or are the individual interests of practitioners too focused for any commonalities?

Despite long discussions during the plenary session and throughout the festival, these (and other) questions remain open.

Conclusions

The use of biosignal monitoring technologies in interactive art contexts has been present for over fifty years. From Alvin Lucier’s pioneering work Music for Solo Performer to the current practice of biosignal-driven performance and sound installation, the field has advanced both in its technical implementations and in the artistic affordances that the medium provides. Developments in medicine and psychophysiology allow us to better understand the meaning and implications of human-generated electrical signals and their correlation to emotion. The work carried out by the Affective Computing Group at MIT and the Music, Sensors and Emotion team at SARC has facilitated the technical aspects of biosignal monitoring for interactive artistic practice. Furthermore, the technical and social advancements in the wider electronic music field that appeared in the early 2000s have evolved and matured into the establishment of specific interest groups that allow for thorough research and artistic practice in the field.

It is now easier than ever to incorporate physiological measurements onto the stage; thus, biosignal-driven art can now be carried out in a practical way, without the need for the large and expensive equipment used in the 1960s and 1970s. This opens the door for deeper artistic and æsthetic explorations, which in my opinion should become the central focus of creative work.

The opportunities seem open-ended, and it is up to the artistic community interested in harnessing the physiological mechanisms of the human body to fully realise their potential beyond the mere novelty of the interface.

Bibliography

Adrian, Edgar D. and Bryan H.C. Matthews. “The Berger Rhythm: Potential changes from the occipital lobes in man.” Brain 57/4 (1934), pp. 355–383.

Aldini, Giovanni. De animali electricitate dissertationes duae. Typographia Instituti Scientiarum: Bonomiae, 1794.

Arslan, Burak, Andrew Brouse, Julien Castet, Jean-Julien Filatriau, Rémy Lehembre, Quentin Noirhomme and Cédric Simon. “Biologically-driven Musical Instrument.” eNTERFACE 05: Summer Workshop on Multimodal Interfaces (Mons, Belgium, 17 July – 11 August 2005). ISCA, 2005.

Arslan, Burak, Andrew Brouse, Julien Castet, Jean-Julien Filatriau, Rémy Lehembre, Quentin Noirhomme and Cédric Simon. “From Biological Signals to Music.” Enactive05. Proceedings of the 2nd International Conference on Enactive Interfaces (Genoa, Italy, 17–18 November 2005). Available online at http://tcts.fpms.ac.be/publications/papers/2005/enactive05_abbacjfjjlrnqsc.pdf [Last accessed 26 April 2010]

Barrass, Stephen. “Listening to the Mind Listening.” Call for submissions for a Concert of Sonifications at the Sydney Opera House during ICAD 2004. http://www.icad.org/websiteV2.0/Conferences/ICAD2004/concert_call.htm

Barrass, Stephen, Michael Whitelaw and Freya Bailes. “Listening to the Mind Listening: An Analysis of sonification reviews, designs and correspondences.” Leonardo Music Journal 16 (December 2006) “Noises Off: Sound Beyond Music,” pp. 13–19.

Barry, Daniel T., Steven R. Geiringer and Richard D. Ball. “Acoustic Myography: A Noninvasive Monitor of Motor Unit Fatigue.” Muscle Nerve 8/3 (March-April 1985), pp. 189–94.

Berger, Hans. “Über das Elektroenkephalogramm des Menschen. I. Mitteilung.” Archiv für Psychiatrie und Nervenkrankheiten 87 (1929), pp. 527–570.

Cacioppo, John T., Louis G. Tassinary and Gary G. Berntson (eds.). Handbook of Psychophysiology. Cambridge, UK: Cambridge University Press, 2007.

De Luca, Carlo J. and Egbert J. Van Dyk. “Derivation of Some Parameters of Myoelectric Signals Recorded During Sustained Constant Force Isometric Contractions.” Biophysical Journal 15/12 (1975), pp. 1167–1180.

Coghlan, Nial, Javier Jaimovich, R. Benjamin Knapp, Donald O’Brien and Miguel Ortiz. “AffecTech: an Affect-Aware Interactive AV Artwork.” ISEA 2009. Proceedings of the 15th International Symposium of Electronic Arts (Belfast: University of Ulster, 23 August – 1 September 2009).

Donnarumma, Marco. “Xth Sense: A Study of muscle sounds for an experimental paradigm of musical performance.” ICMC 2011: “innovation : interaction : imagination”. Proceedings of the International Computer Music Conference (Huddersfield, UK: Centre for Research in New Music (CeReNeM) at the University of Huddersfield, 31 July – 5 August 2011).

Eaton, Manford. Bio-Music: Biological feedback, experiential music system. Kansas City, USA: Orcus, 1971.

Fuller, George D. Biofeedback: Methods and procedures in clinical practice. San Francisco: Biofeedback Press, 1977.

Galvani, Luigi. “De viribus electricitatis in motu musculari commentarius.” Comment Bonon Scient et art Inst Bologna 7 (1791), pp. 363–418.

_____. Opere edite ed inedite del Professore Luigi Galvani raccolte e pubblicate dall’Accademia delle Science dell’Istituto di Bologna. Edited by Silvestro Gherardi. Accademia delle Science dell’Istituto di Bologna: Dall’Olmo, Bologna. 1841.

Gloor, Pierre. Hans Berger on the Electroencephalogram of Man. The Fourteen Original Reports on the Human Electroencephalogram. New York: Elsevier, 1969.

Gonsalves, Tina. “Tina Gonsalves’ Digital Folio.” 2009. http://www.tinagonsalves.com/INTERFrame.html [Last accessed 26 April 2010].

Greenman, Phillip E. Principles of Manual Medicine. Philadelphia, USA: Lippincott Williams & Wilkins, 2003.

Haag, Andreas, Silke Goronzy, Peter Schaich and Jason Williams. “Emotion Recognition Using Bio-Sensors: First Steps Towards an Automatic System.” In Affective Dialogue Systems. Berlin/Heidelberg: Springer Verlag, 2004, pp. 36–48.

Henry, Pierre. Mise en musique du corticalart de Roger Lafosse (1971). Prospective 21e Siècle [6521 022]. 1971.

Holmes, Thomas B. Electronic and Experimental Music: Pioneers in Technology and Composition. New York: Routledge, 2002.

Jaimovich, Javier. “Ground Me!: An Interactive Sound Art Installation.” NIME 2010. Proceedings of the 10th International Conference on New Instruments for Musical Expression (Sydney, Australia: University of Technology Sydney, 15–18 June 2010). pp. 391–394.

Jaimovich, Javier, Nicholas Gillian, Paolo Coletta, Esteban Maestre and Marco Marchini. “Workshop on Multi-Modal Data Acquisition for Musical Research.” NIME 2010. Proceedings of the 10th International Conference on New Instruments for Musical Expression (Sydney, Australia: University of Technology Sydney, 15–18 June 2010). p. 551.

Juslin, Patrik N. and John A. Sloboda. Music and Emotion: Theory and Research. Series in Affective Science. Oxford, UK: Oxford University Press, 2001.

Knapp, R. Benjamin, Jonghwa Kim and Elisabeth André. “Physiological Signals and their Use in Augmenting Emotion Recognition for Human-Machine Interaction.” In Emotion-Oriented Systems, Cognitive Technologies. Edited by Roddy Cowie, Catherine Pelachaud and Paolo Petta. Berlin/Heidelberg: Springer Verlag, 2011, pp. 133–159.

Knapp, R. Benjamin and Perry R. Cook. “The Integral Music Controller: Introducing a direct emotional interface to gestural control of sound synthesis.” ICMC 2005: Free Sound. Proceedings of the International Computer Music Conference (Barcelona, Spain: L’Escola Superior de Música de Catalunya, 5–9 September 2005), pp. 4–9.

Knapp, R. Benjamin and Hugh S. Lusted. “A real-time digital signal processing system for bioelectric control of music.” ICASSP-88. International Conference on Acoustics, Speech, and Signal Processing, 1988. Vol. 5, pp. 2556–2557.

Knapp, R. Benjamin and Hugh S. Lusted. “A Bioelectric Controller for Computer Music Applications.” Computer Music Journal 14/1 (Spring 1990) “New Performance Interfaces (1),” pp. 42–47.

Keislar, Doug, Robert Pritchard, Todd Winkler, Heinrich Taube, Mara Helmuth, Jonathan Berger, Jonathan Hallstrom and Brad Garton. “1992 International Computer Music Conference, San Jose, California USA, 14–18 October 1992.” Computer Music Journal 17/2 (Summer 1993) “Synthesis and Transformations,” pp. 85–98.

Kreibig, Sylvia D., Frank H. Wilhelm, Walton T. Roth and James J. Gross. “Cardiovascular, Electrodermal and Respiratory Response Patterns to Fear- and Sadness-Inducing Films.” Psychophysiology 44/5 (September 2007), pp. 787–806.

Lusted, Hugh S. and R. Benjamin Knapp. “Controlling Computers with Neural Signals.” Scientific American 275/4 (October 1996), pp. 82–87.

Machover, Tod and Joe Chung. “Hyperinstruments: Musically Intelligent and Interactive Performance and Creativity Systems.” ICMC 1989. Proceedings of the International Computer Music Conference (USA: Ohio State University, 1989).

Marrin, Teresa and Rosalind W. Picard. “The Conductor’s Jacket: A Device for recording expressive musical gestures.” ICMC 1998. Proceedings of the International Computer Music Conference (Ann Arbor MI, USA: University of Michigan 1998), pp. 215–219.

Marston, William M. Lie Detector Test. New York: R.R. Smith, 1938.

Maton, Anthea, Jean Hopkins, Susan Johnson, David LaHart, Maryanna Q. Warner and Jill D. Wright. Human Biology And Health. Englewood Cliffs NJ: Prentice Hall, 1994.

McCleary, Robert A. “The Nature of the Galvanic Skin Response.” Psychological Bulletin 47 (1950), pp. 97–117.

Miranda, Eduardo Reck. “Brain-Computer Interface for Generative Music.” ICDVRAT 2006. Proceedings of the 6th International Conference on Disability, Virtual Reality and Associated Technologies (Esbjerg, Denmark, 18–20 September 2006), pp. 295–302.

Miranda, Eduardo Reck and Andrew Brouse. “Toward Direct Brain-Computer Musical Interfaces.” NIME 2005. Proceedings of the 5th International Conference on New Instruments for Musical Expression (Vancouver: University of British Columbia, 26–28 May 2005), pp. 216–219.

_____. “Interfacing the Brain Directly with Musical Systems: On Developing systems for making music with brain signals.” Leonardo 38/4 (August 2005), pp. 331–336, 2005b. doi: 10.1162/0024094054762133. URL http://dx.doi.org/10.1162/0024094054762133.

Miranda, Eduardo Reck, Ken Sharman, Kerry Kilborn and Alexander Duncan. “On Harnessing the Electroencephalogram for the Musical Braincap.” Computer Music Journal 27/2 (Summer 2003) “Live Electronics in Recent Music of Luciano Berio,” pp. 80–102.

Miranda, Eduardo Reck and Marcelo Wanderley. New Digital Musical Instruments: Control And Interaction Beyond the Keyboard. Computer Music and Digital Audio Series. Madison WI, USA: A-R Editions, Inc. 2006. http://portal.acm.org/citation.cfm?id=1201683

Nold, Christian (ed.). Emotional Cartography — Technologies of the Self. Softbook published under a Creative Commons Attribution, 2009. http://emotionalcartography.net [Last accessed 26 April 2010].

Ojanen, Mikko, Jari Suominen, Titti Kallio and Kai Lassfolk. “Design Principles and User Interfaces of Erkki Kurenniemi’s Electronic Musical Instruments of the 1960s and 1970s.” NIME 2007. Proceedings of the 7th International Conference on New Instruments for Musical Expression (New York: New York University, 6–10 June 2007), pp. 88–93. DOI=10.1145/1279740.1279756 http://doi.acm.org/10.1145/1279740.1279756

Ortiz, Miguel. “Towards an Idiomatic Compositional Language for Biosignal Interfaces.” Unpublished PhD Thesis. Belfast, Northern Ireland: Queen’s University, 2010.

Patmore, David W. and R. Benjamin Knapp. “Towards an EOG-based Eye Tracker for Computer Control.” Proceedings of the 3rd International ACM Conference on Assistive Technologies (New York, USA, 1998), pp. 197–203.

Picard, Rosalind W. Affective Computing. Cambridge MA, USA: MIT Press. 1997.

Piccolino, Marco. “Animal Electricity and the Birth of Electrophysiology: The Legacy of Luigi Galvani.” Brain Research Bulletin 46/5 (1998), pp. 381–407.

Rilke, Rainer Maria. Where Silence Reigns: Selected Prose. New York: New Directions Publishing Corporation, 1978.

Rosenboom, David. “Extended Musical Interface with the Human Nervous System: Assessment and prospectus.” Leonardo 32/4 (August 1999), p. 257.

Siegmeister, Elie, Alvin Lucier and Mindy Lee. “Three Points of View.” The Musical Quarterly 65/2 (April 1979), pp. 281–295. ISSN 00274631. URL http://www.jstor.org.ezproxy.qub.ac.uk/stable/741709.

Sheer, Daniel E. “Sensory and Cognitive 40-Hz Event-Related Potentials: Behavioral correlates, brain functions and clinical application.” Brain Dynamics. Edited by E. Basar and T.H. Bullock. Springer series in Brain Dynamics vol. 2. Berlin/Heidelberg: Springer Verlag, 1989, pp. 339–374.

Steriade, Mircea, Pierre Gloor, Rodolfo R. Llinas, Fernando H. Lopes da Silva and M. Marsel Mesulam. “Basic Mechanisms of Cerebral Rhythmic Activities.” Electroencephalography and Clinical Neurophysiology 76/6 (December 1990), pp. 481–508.

Swartz, Barbara E. and Eli S. Goldensohn. “Timeline of the History of EEG and associated fields.” Electroencephalography and Clinical Neurophysiology 106/2 (February 1998), pp. 173–176.

Tanaka, Atau. “Musical Technical Issues in Using Interactive Instrument Technology with Application to the BioMuse.” ICMC 1993. Proceedings of the International Computer Music Conference (Japan: Waseda University, 1993), pp. 124–126.

_____. “Sensor based Musical Instruments and Interactive Music.” In The Oxford Handbook of Computer Music. Edited by Roger T. Dean. Oxford, UK: Oxford University Press, 2009, pp. 233–257.

Tanaka, Atau and R. Benjamin Knapp. “Multimodal Interaction in Music using the Electromyogram and Relative Position Sensing.” NIME 2002. Proceedings of the 2nd International Conference on New Instruments for Musical Expression (Dublin: Media Lab Europe, 24–26 May 2002), pp. 1–6.

Teitelbaum, Richard. “In tune: Some early experiments in biofeedback music (1966–1974).” In Biofeedback and the Arts: Results of early experiments. Edited by David Rosenboom. Vancouver: Aesthetic Research Centre of Canada, 1974.

Valentinuzzi, Max E. Understanding the Human Machine: A Primer for bioengineering. Singapore: World Scientific Publishing Company, 2004.

Webster, John G. Medical Instrumentation: Application and Design. 3rd Edition. New York: John Wiley and Sons, 1997.

Wishart, Trevor. “Transforming Sounds: Confirming and Confounding Expectations.” Paper presented at the International Conference on Music and Emotion (Durham, UK: Durham University, 31 August – 3 September 2009).
