[Gallery]

Stelarc

Also published in this issue of eContact! is “Fractal Flesh — Alternate Anatomical Architectures: Interview with Stelarc” by Guest Editor Marco Donnarumma.

Amplified Body

The amplified body performances were accompanied by the Third Hand, an EMG-controlled mechanism. It had 290-degree wrist rotation (clockwise and counter-clockwise), and its pinch-release and grasp-release actions were actuated by muscle signals from the abdominal and leg muscles, allowing individual motion of the three hands. Thus some body signals were acoustically amplified whilst others were used as control signals for the prosthesis. Body signals ranging from microvolts to millivolts were picked up using electrodes and pre-amplified before going to a synthesizer or the switching mechanism of the Third Hand. Contact microphones on the Third Hand and a digital delay pedal enabled the sampling and looping of sounds that sometimes synchronised with and sometimes counterpointed the rhythmic beating of the heart and the intermittent firing of the muscle signals. The lighting installation of the performance flickered and flared in response to the fluctuating body signals.

Zombie

Chris Coe (Digital Primate) and Rainer Linz (Ontological Oscillators) accompanied the Prosthetic Head’s vocals for the original Humanoid CD, which included six studio-recorded tracks. Humanoid: Fractal Remix was created, with the assistance of Cameron Jones, by printing fractal shapes onto the data surface. This was done at the Centre for Mathematical Modelling, Faculty of Engineering and Industrial Sciences, Swinburne University, Melbourne. Every CD behaves differently depending on the specific printed shape, its colour and location on the CD surface, as well as the user’s software system. The duration of each track varies depending on the remix process. So, depending on whether the CD is played on a PC, a Mac or an audio CD player, the tracks will be remixed with added pauses, sounds and reverb that were not in the original tracks.

Extended Arm

Whilst the choreography of the left arm was pre-programmed with 11 degrees of freedom (individual finger movements, arm flexion and extension, biceps and deltoids), the right arm was extended to primate proportions with an 11-degree-of-freedom mechanical manipulator. The image of the body with the extended arm was visually amplified by its shadow on the back wall. On the left-hand side wall was projected the live video streaming on the website that included a 3D model of the hand that mimicked the movements of the actual mechanism. On the right-hand side was projected the live video mixing of an “æsthetic surveillance system” of cameras that monitored the performance. An array of sensors acoustically registered the movements of the left arm, complementing and counterpointing the pneumatic air sounds, solenoid clicks and mechanical finger clacking sounds. The audience was immersed in an acoustical space of an extended and involuntary body.

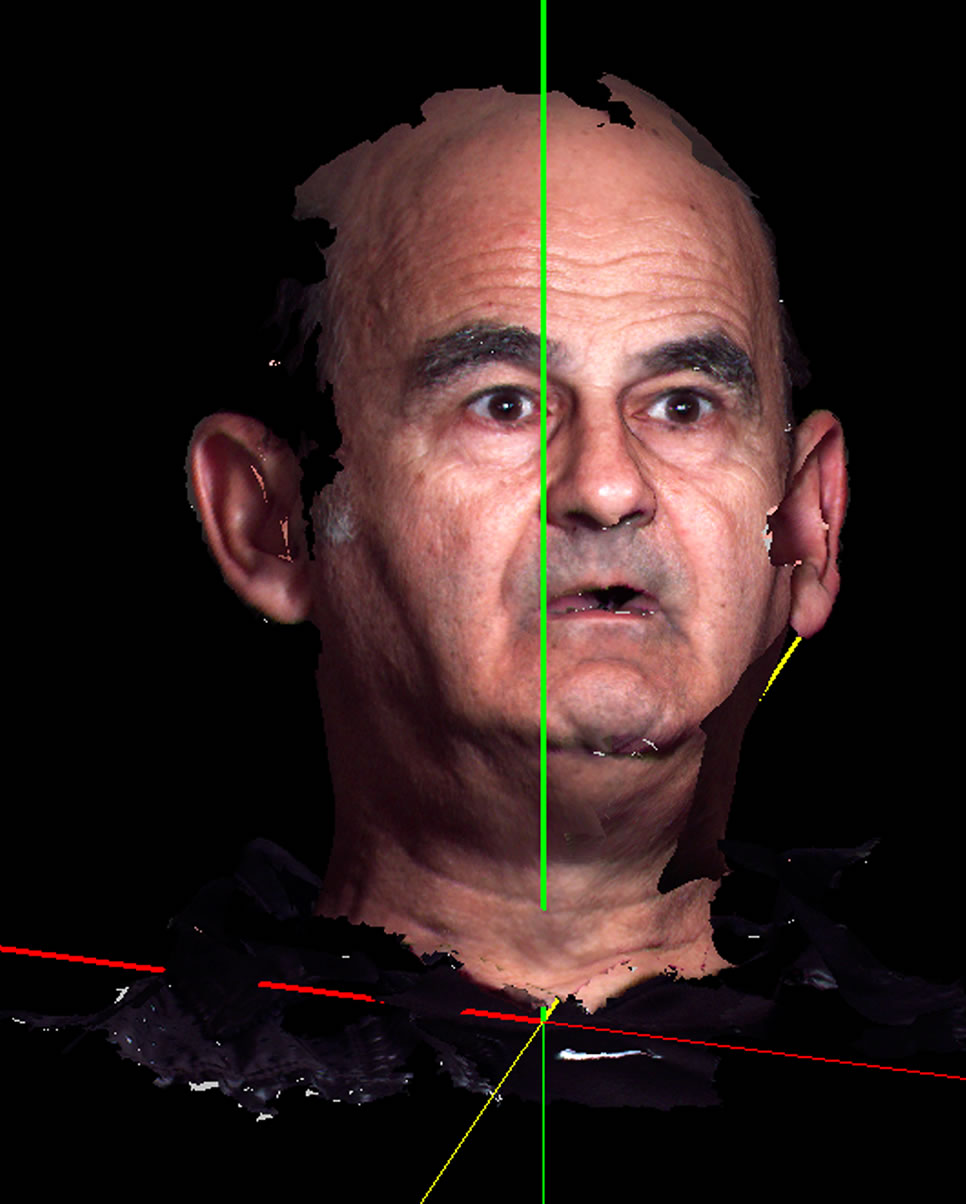

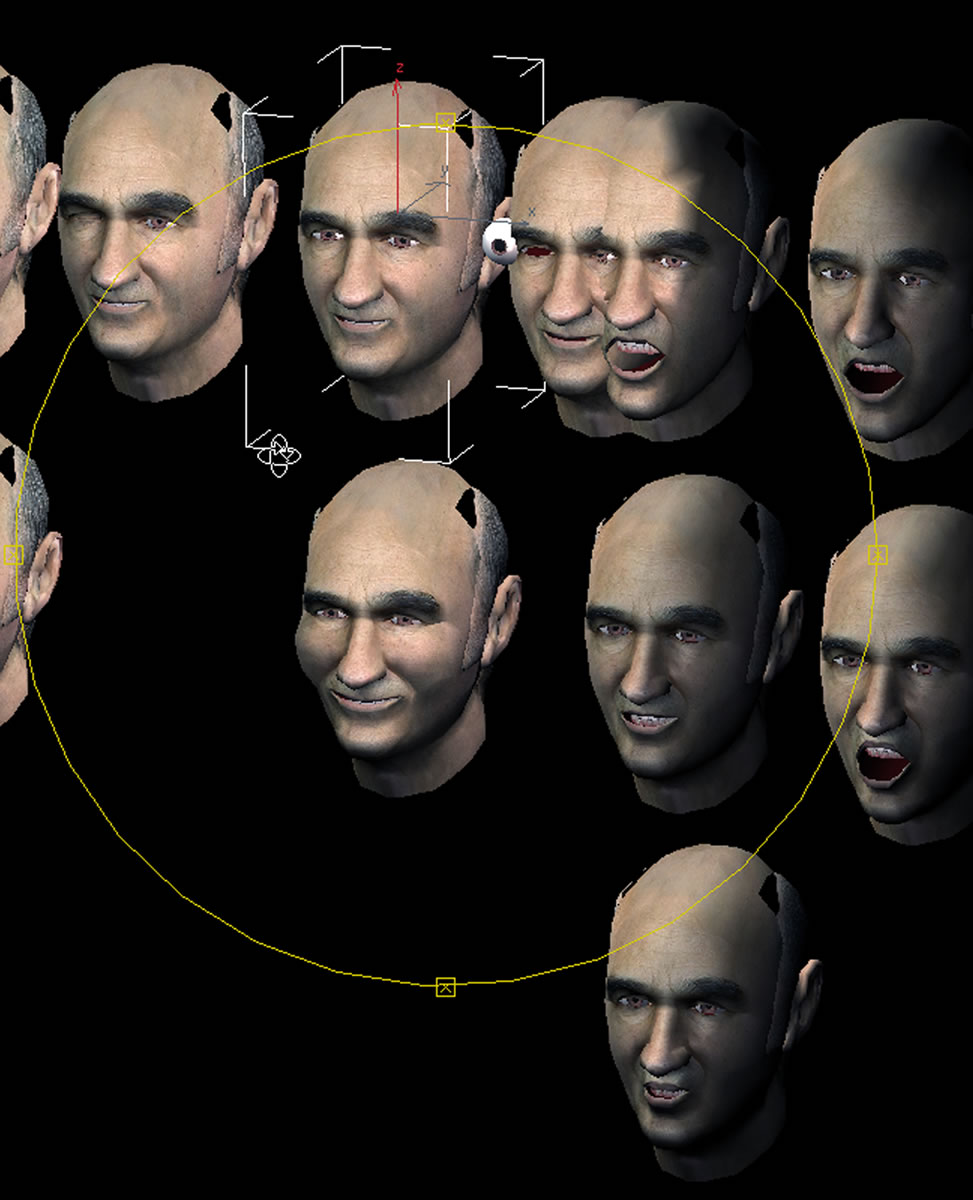

Prosthetic Head

The Prosthetic Head is an embodied conversational agent: an automated, animated, informed and reasonably intelligent head that speaks to the person who interrogates it. The 3D model is a 3000-polygon mesh, skinned with the artist’s face. The eyeballs, tongue and teeth are separate moving elements. It has a database and a conversational strategy that, when coupled to a human head, is capable of appropriate verbal exchanges. Facial expressions and emotions can be scripted. It can creatively compose and recite its own poetry-like verse and song-like sounds, which are different each time it is asked. The performance at BEAM was an improvisation and interplay of sounds between an artificial agent, prompted by the artist, and a vocalist and percussionist. The artist was also playing an iPhone app, generating sounds that sometimes synchronised with and sometimes counterpointed the Prosthetic Head, which was projected on two large screens behind the artists.
