Max/MSP vs. the iPhone

Two compositional approaches to the use of live electronics

Zellen-Linien

This 2007 composition is a twenty-minute solo piece that uses live electronics to create a polyphonic relationship between the instrument and many electronic voices. The initial idea was to create a prepared piano, the preparation of which would change, sometimes from note to note. However, there is no physical preparation inside the piano; all the sound transformations are realized with Max/MSP.

Zellen-Linien is the result of approximately ten years of experimentation with the work-in-progress, Das Bleierne Klavier, which was a guided improvisation. The electronics for Zellen-Linien are organized into thirty-two passages, which I refer to as events. The pianist cycles through them with a MIDI sustain pedal positioned on the left side of the piano pedals. Two microphones are used to record certain passages and to track the amplitude of the instrument. Loudspeakers are placed around the audience and create an extension of the instrument into the concert space. Sound movements are calculated in real time using ambisonics.

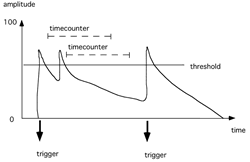

Amplitude Tracking

The basic link between the live part and the electronics is established by measuring the microphone signal amplitude. The signals of both microphones are initially mixed to a mono signal. This signal is then squared and passed through a low-pass filter. The resulting signal is compared to a threshold level, which is specific to each event. When the signal surpasses the threshold, a trigger is initiated. When the signal falls below the threshold, a timer starts. The signal needs to remain below the threshold for a set duration, which is specific to each event, before a new trigger can be initiated. The low-pass filter, typically in the range of 1–30 Hz, regulates the responsiveness of the triggering mechanism: the higher the frequency, the faster the amplitude envelope will change. The timer helps to prevent inadvertent re-triggering, but can also be used as a compositional tool with which to isolate attacks within a dense piano passage. By varying these three parameters (threshold value, low-pass filter frequency and duration), very different behaviours may be elicited.
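The triggering logic described above can be sketched in a few lines of Python. This is only an illustration, not the Max/MSP patch itself: the one-pole filter, the function name `detect_triggers` and all parameter names are assumptions, since the text specifies only "squared", "low-pass filter", "threshold" and "duration".

```python
import math

def detect_triggers(samples, sample_rate, threshold, cutoff_hz, hold_ms):
    """Return the sample indices at which a trigger fires (hypothetical helper)."""
    # One-pole low-pass coefficient. The filter type is an assumption;
    # the text only states that a low-pass filter of 1-30 Hz is used.
    alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    hold_samples = int(sample_rate * hold_ms / 1000.0)

    envelope = 0.0
    armed = True       # ready to fire on the next upward crossing
    below_count = 0    # how long the envelope has stayed under the threshold
    triggers = []

    for i, x in enumerate(samples):
        envelope += alpha * (x * x - envelope)  # square, then smooth
        if envelope > threshold:
            below_count = 0
            if armed:
                triggers.append(i)
                armed = False
        else:
            below_count += 1
            if below_count >= hold_samples:
                armed = True  # the timer has elapsed; re-triggering is allowed
    return triggers
```

With a short hold duration, two attacks separated by silence both fire; with a long one, the second attack is ignored, which is how the timer can isolate single attacks within a dense passage.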

Many events in the piece use two thresholds, one for softer and one for louder notes. As the envelope follower only sends trigger values, they can be used for a large variety of purposes.

Relative Envelope Follower

The actual amplitude of the signal depends on many factors, such as the types of microphones used, their distance from the strings, gain levels on the mixer, send levels into the computer sound card and the dynamics produced by the pianist. These variables make it very difficult to set the threshold levels once with the expectation that they will work in any rehearsal and concert situation. Therefore, instead of working with absolute threshold values, the mean energy of the last 500 ms is calculated and the threshold acts as a positive offset to it. In this way, the computer analyzes dynamic changes, rather than absolute sound energy. For example, suppose a trigger is meant to occur on a mezzo forte note after a mezzo piano passage. If the pianist already plays the preceding passage at mezzo forte, the written mezzo forte note will naturally be played louder than the preceding notes. The relative offset captures this increase in volume and activates the trigger.
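A minimal sketch of such a relative threshold follows, assuming the energy has already been smoothed into one value per analysis frame. The function name, the frame-based representation and the choice to exclude the current frame from the running mean are assumptions made for illustration.

```python
from collections import deque

def relative_triggers(energies, frame_rate, offset, window_ms=500.0):
    """Fire when the energy exceeds the recent mean by `offset` (hypothetical helper)."""
    # Sliding window holding the energies of the last `window_ms` milliseconds.
    window = deque(maxlen=max(1, int(frame_rate * window_ms / 1000.0)))
    above = False
    triggers = []
    for i, energy in enumerate(energies):
        if window:
            mean = sum(window) / len(window)
            crossed = energy > mean + offset  # threshold is relative to the mean
            if crossed and not above:
                triggers.append(i)            # fire only on the upward crossing
            above = crossed
        window.append(energy)
    return triggers
```

In this sketch, a passage played at a higher overall level produces the same trigger as a softer one, as long as the accented note stands out from its surroundings by the same amount.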

Events

Each event establishes a certain relationship between the piano and the electronics. The following treatments are used individually or in combination to create a multi-faceted network between the two media:

- Playback of short sounds upon detection of a threshold crossing (attack);

- Change of parameters in the real-time granular synthesis upon detection of a threshold crossing;

- Real-time recording of piano material for later playback;

- Real-time recording of piano material for granulation;

- Delay lines and back-and-forth, zigzag playback of live recorded material;

- Playback of prepared sound files;

- Reversed playback of live piano material;

- Recording of FFT frames and re-synthesis to freeze the piano sound.

Performers learn the type of interaction within each event very quickly, notably how their playing influences the reactions of the live electronics. I have also observed that performers are often more engaged with the electronics in this type of idiom than when they play with fixed media.

The Performance Patch

The Max/MSP patch has been converted into an autonomous application (a “stand-alone”) which does not require a license. There is also no need for additional libraries. The performance folder contains everything needed and has been tested on a number of different machines.

In order to run the patch, I incorporated the start-up routine on the left of the main window. When users work down the following list, they can adjust all the required variables to their environments:

- Initialization and preloading of sound files.

- Choice of sound card to be used, I/O and signal vector size.

- Choice of number of output channels (the lower right part of the patch will show the appropriate number of output VU’s for the chosen setup).

- Input and output routings according to the sound card.

- Test routine to check the correct routing of the speakers (the noise bursts should circulate in clockwise direction).

- Choice of MIDI port for the sustain pedal.

- Choice of polarity of the sustain pedal.

- Sound check — involves the setting of the microphone levels into the sound card. The check is done on measures 228–245. In this passage, sound files should be triggered when the pianist plays louder than pianissimo. Once the level is set, it will work for all events in the piece.

- Tuning of the Max patch to the pitch of the piano.

- Possibility to amplify the piano through the patch if no external mixer is used.

To prepare a certain event during the rehearsal process, choose the event number in the yellow part of the patch. The event can be switched on either with the next press of the MIDI pedal or by pressing the space bar on the computer keyboard. One can also use the left and right arrow keys on the computer keyboard to advance and back up.

The envelope follower is displayed in the upper right part of the window. This unit traces the incoming piano sounds and sends the triggers. Everything is automated and no particular action is required during the performance. The VU-meters are in the lower part of the window. The input signals from the piano are on the left. They are followed by the eight internal sources: granular synthesis in “channels” 1–4, with delay on 1 and 2 and backwards playback on 3 and 4, sound file playback on 5 and 6, and buffer playback on 7 and 8. These eight layers are then spatialised. Depending on the version chosen (8-, 6- or 4-channel), the patch renders these movements accordingly.

Relationship of Piano and Electronics

The use of pure live electronics without prepared sound material has one compositional limitation insofar as the electronic sounds are always a result of a microphone input. Electronic sounds occur either at the same time as the instrumental part or as a delayed version of it. I prefer to combine these techniques with prepared sound files, recorded in the studio, which can foreshadow musical material and make temporal links, a process that allows the instrument to become a “delay” of the electronics. For example, the chord progression in bars 295–325 at the end of the piece is announced in the electronics in bar 8. Many other moments in Zellen-Linien make use of this compositional principle. Additionally, untreated sections of the piano part, as well as treated pre-recorded sounds, are played back at different points during the performance.

Synchronisation

As previously described, the electronics are controlled primarily by the envelope follower. The performer has a MIDI pedal, placed on the left side of the regular piano pedals, to cue the computer to start events or to advance the computer through the electronic score in preparation for a change. Events are not activated when the performer presses the MIDI pedal. Instead, the envelope follower is switched on and event activation happens with the next incoming note.
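The two-step activation (the pedal arms the envelope follower, the next note starts the event) can be pictured as a small state machine. The class and method names below are hypothetical, and the sketch leaves out everything the envelope follower does inside a running event.

```python
class EventSequencer:
    """Pedal presses cue an event; the next detected note activates it."""

    def __init__(self, num_events):
        self.num_events = num_events
        self.prepared = 0       # event cued by the pedal, not yet sounding
        self.active = 0         # event currently sounding (0 = none yet)
        self.listening = False  # True while waiting for the next note

    def pedal_pressed(self):
        # The pedal does not start anything audible; it only arms the follower.
        if self.prepared < self.num_events:
            self.prepared += 1
            self.listening = True

    def note_detected(self):
        # Called when the envelope follower reports a threshold crossing.
        if self.listening:
            self.active = self.prepared
            self.listening = False
            return self.active
        return None
```

A usage example under these assumptions: after `pedal_pressed()`, `active` is unchanged; the first `note_detected()` call returns the new event number, and further calls return `None` until the pedal is pressed again.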

Spatialisation

Spatialisation is an important aspect of the piece. The unprocessed piano sound is amplified through the front two speakers, placed on either side of the instrument. Both offline and live processed elements are spatialised in predetermined trajectories in a circle around the audience, moving on the periphery (as opposed to crossing the sound field) at changing speeds. Instead of allocating specific speakers for events, spatial movement is specified by degrees of distribution. This means that the piece can be realized in versions with four, six or eight speakers. The stereo version provided (to the performer) is only to be used for rehearsals in smaller setups and is not to be used for performances.
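To illustrate why an angle-based description carries over to four, six or eight speakers, here is a sketch of a textbook first-order, two-dimensional ambisonic decode to a regular ring. The ambisonic order and decoder used in the actual patch are not stated in this text, so this is an assumed simplification. The speaker layout (equally spaced, symmetric about the front, numbered clockwise from front left) is likewise an assumption, as are both function names.

```python
import math

def speaker_azimuths(num_speakers):
    """Clockwise azimuths in degrees from front centre; index 0 is front left."""
    step = 360.0 / num_speakers
    return [k * step - step / 2.0 for k in range(num_speakers)]

def speaker_gains(source_deg, num_speakers):
    """Basic first-order 2D decode of a source at `source_deg` to a regular ring."""
    gains = []
    for phi in speaker_azimuths(num_speakers):
        diff = math.radians(source_deg - phi)
        # Omnidirectional part plus first-order directional part.
        gains.append((1.0 + 2.0 * math.cos(diff)) / num_speakers)
    return gains
```

The same trajectory, expressed as a changing `source_deg`, yields a set of gains for any ring size. With fewer speakers each one covers a wider arc, which is consistent with the observation that the 4-channel version renders movement less precisely.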

Technical Requirements for Zellen-Linien

- grand piano with sostenuto pedal

- 2 microphones (condenser, if possible Neumann KM 184)

- MIDI sustain pedal

- device to translate the sustain pedal into MIDI (e.g. keyboard with sustain input)

- MIDI interface

- 8-channel sound card (e.g. RME Multiface or MOTU 828)

- mixer with 8 individual analogue outputs and 3 Aux sends

- 8 speakers, surrounding the audience (+ amplifiers, stands etc.)

- small stage monitor for the pianist

- Apple Macintosh computer (Intel)

The piano should be amplified with two microphones: the microphone for the high register should be directed to the strings of the pitch F3 (small octave f: a fifth below middle C) and the microphone for the low register should point to the middle of the low register. Both microphone signals can either be mixed in the console and then sent over one single Aux into the sound card (input 1) or sent to the sound card individually. The patch mixes them automatically to generate one single mono signal internally.

The electroacoustic space for this piece is best represented through an 8-channel diffusion around the audience. Note that the channel assignment starts at the front left and then goes around clockwise.

The eight channels of the electronics are routed through the eight busses (groups) of the mixer to the eight loudspeakers. The output numbers of the sound card correspond to the loudspeaker numbers. Speakers 1 and 2 are also used for the amplification of the piano. The pianist operates a MIDI sustain pedal to change the events. One needs a small keyboard to convert the sustain pedal signal into MIDI messages. The mix of the two piano microphones is routed through Aux 1 and 2 to inputs 1 and 2 of the sound card. The stage monitor receives a mix of the electronics.

If an 8-channel diffusion system is not available, one can also perform the piece in a 6-channel or 4-channel version. However, the 4-channel version is not adequate for larger halls and does not render the movement patterns as well.

Conclusion

Many different pianists have played this composition over the past two and a half years. I never met some of them, and I was not present at most of the performances. This seems to be a normal situation with pure instrumental music but it is rather rare in cases of live electronics (outside of large institutions like IRCAM). This is because, in most cases, the presence of the composer is required in order for the electronics to work. In view of this difficulty, I tried to provide an application and interface which are easily transferable to another computer and that allow for a very fast setup and sound check. The additional activities of the performer (pressing a MIDI pedal) are written as part of the musical phrases in order to integrate them as much as possible into the musical flow.

Nevertheless, acquiring the requisite technology and rehearsal conditions to be able to perform the piece remains a large endeavour.

Irrgärten

Irrgärten, which makes use of “easy electronics,” is an outgrowth of my experience with live electronics and instruments. This composition, for two pianos and electronics, uses two iPhones and four small loudspeakers placed inside the pianos to render the electronic part.

Unfortunately, when instrumentalists rehearse compositions with electronics, they often rehearse their parts like chamber music and do not accustom themselves to the electronic sound world, or to the interaction with it. The electronics are added at a very late point in the process, often only days before the concert and the musicians have no time to adapt their interpretation to this new situation.

My desire to avoid returning to a pre-produced CD track, which causes many problems in terms of synchronization with the instrumental part and forces the musicians into a rigid timeframe, was the impetus to experiment with the capacity of the iPhone. The electronic sounds are short sequences, which are re-synchronized to the live part using amplitude tracking with the built-in microphone.

This setup is, indeed, very limited compared to live processing with Max/MSP or other computer software. However, I wanted to see how far I could go, musically, with such a sparse configuration that avoids microphones, sound cards and computers altogether.

Where Zellen-Linien explores the interplay of the solo instrument and its electronic counterpart, in Irrgärten I try to establish several layers of relationships: these exist between the two instrumental parts as well as between each piano and its own electronic “shadow”. The sounds of the electronics are, for the most part, recorded piano phrases and they create a second voice within each instrument.

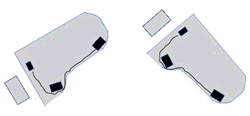

Easy Setup

There is no need for loudspeakers in the concert hall. The electronics are played back through four small, high quality monitors, two of which are placed inside each piano. The monitors face the piano lid, which acts as a sound reflector and they are placed as far apart as possible from each other. For example, one monitor is placed close to the keyboard in the highest register and the other one is close to the far end of the low strings (Fig. 5). The electronic sounds should blend as much as possible with the instrumental sounds. The mini-jack output of each iPhone is connected to the monitors inside the piano. The sound files from each iPhone are in stereo. I hope the setup more easily enables musicians to rehearse with the electronics and allows them to prepare the installation for a performance without a sound technician.

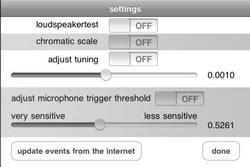

The iPhone Interface

There are two separate applications — one for each piano (Irrgaerten-1 and Irrgaerten-2), downloadable from the iTunes AppStore. The settings interface (Fig. 6) looks the same on both applications, but the electronic scores (the events) and the sound files are different. To start a rehearsal, one presses the “settings” button on the main interface.

The “loudspeaker test” is used to ensure that the two channels are connected to the corresponding monitor inside the piano. The “chromatic scale” plays back a chromatic scale from the lowest to the highest piano note and serves to set the general level on the monitors (7 on the rotary knob for the Fostex Monitors is a good reference). The output volume of the iPhone should be set to maximum. This sound file can also be used to determine the sound quality of the loudspeakers in cases where the Fostex monitors are not available. The sound should be as natural as possible and evoke the impression that the real piano is playing. The use of small computer or game loudspeakers is not recommended, as they are unable to reproduce the sound quality of a piano.

The “adjust tuning” feature will play a repeated A4 note at 440 Hz. The iPhone tuning can be adjusted to the tuning of the piano with the slider below the switch. The new tuning value is stored in the preferences of the application and is ready to be used. There is, thus, no need to go back to the tuning procedure between rehearsal and concert. OpenAL, the lowest level of sound playback within the iPhone API, allows the tuning feature to be accessed.
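The arithmetic behind such a tuning adjustment is simple. Assuming the sound files were recorded at A4 = 440 Hz and that the adjustment is applied as a playback-rate multiplier (OpenAL exposes one as the `AL_PITCH` source property, but whether the application uses exactly that property is an assumption), the ratio and its equivalent in cents can be computed as follows. The function names are hypothetical.

```python
import math

REFERENCE_A4_HZ = 440.0  # tuning of the recorded sound files, as stated in the text

def playback_ratio(piano_a4_hz):
    """Rate multiplier that shifts 440 Hz material to the piano's A4."""
    return piano_a4_hz / REFERENCE_A4_HZ

def cents_offset(piano_a4_hz):
    """The same adjustment expressed in cents (100 cents = one semitone)."""
    return 1200.0 * math.log2(piano_a4_hz / REFERENCE_A4_HZ)
```

For a piano tuned to A4 = 442 Hz, the ratio is slightly above 1 and the offset is a little under 8 cents.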

Using the iPhone as Contact Microphone

Because the various iPhone/iPod models have different built-in microphones, the input signal varies from model to model. The overall sensitivity is too high, which results in false triggers when the other piano is playing loud notes. The solution is to cover the built-in microphones with sticky tape and to tape the iPhones to the piano music stand. That way, each iPhone only picks up the notes from its own instrument. The sensitivity value of 0.99 (less sensitive) worked best for the iPod (fourth generation), but this value may vary depending on the piano used. To adjust the threshold, one uses the function “adjust microphone trigger threshold.” Each note played forte on the piano should gate two consecutive chords in the electronics part. However, the second chord in the electronics part should not “auto-trigger” another sound file. If that happens, the microphone sensitivity is set too high. The sensitivity value is stored in the preferences of the application and used from then on.

Update Events from the Internet

The electronic score is stored as a database on the device independent of the sound files. Each event is marked in the musical score with a number. The numbers are different for each piano because the sound files are not always triggered at the same time. Each event contains the information as to which sound file to play, as well as data about volume and durations. If an update (corrections) to these electronic scores becomes necessary, I shall upload a new database onto a specific html page. The “update events from the internet” button can be used to download the updated version. The location of this updated file is known to the application, thus no further information is necessary.

Performance Interface

The large rectangular area at the top of the screen of the performance interface (Fig. 7) is the progress bar. It gives visual feedback on, for example, how far along one is within any given event. After an event has begun, the progress bar scrolls from left to right.

When the progress bar reaches the right edge, the event is finished. Most often, the display then presents a “green” progress bar. This indicates that the iPhone has activated the internal microphone and is waiting (listening) for the next note to be played. The pianist sees the indication, “green,” for such moments in the instrumental score (Fig. 8).

When the pianist articulates the next note, the iPhone automatically starts the next event and thus plays synchronously with the piano. This gives musicians some freedom for rubato and interpretation. Nonetheless, the metronome indications in the score should be performed as accurately as possible. Additionally, there are several places where the pianist is required to touch the button “touch” on the lower left corner of the interface to switch the listening feature back on (marked with the term “touch” in the score). If the following event requires this manual “touch” action, the progress bar of the current event shows in “red” to remind the player of the upcoming “touch” action. Once the previous event has terminated and the iPhone is ready for the touch action, the progress bar turns “pink.”

To navigate to a different event during the rehearsal process, the pianist must press the “prepare” button, scroll to the desired event number and select it from the menu. The application returns automatically to the main screen and the small number on the right side of the “prepare” button indicates the prepared event number. The pianist activates an event by pressing the “touch” button. After the pianist presses “touch”, the progress bar advances while the sound files preload to give the pianist time to prepare to play before the event is activated. The pianist can also use the “prev” and “next” buttons to navigate to adjacent events during the rehearsal process. “Stop” stops playback and should not be used during a performance.

The Electronic Score within the iPhone Application

The database contains information about the name of the current sound file, its length, the trigger threshold for the microphone, the duration for the progress bar and its colour for each event. The sound files are divided into two groups: streaming and buffer sounds. The streaming sounds are read from the hard disk; only the first two seconds are preloaded into memory. During playback, samples are constantly loaded into three circular buffers in order to maintain enough data in memory for gapless playback. Such preloading is necessary in order to start the sound files at the very moment the microphone level crosses the programmed threshold.

Certain events (for example: piano 1, event 49) do not simply play one single sound file. For the entire duration of the event, the microphone detects loud notes in the piano part and triggers short sound files. In these sections, the rendering of the electronics may vary from performance to performance, depending on the interpretation. The progress bar is “blue” for these events.
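One way to picture a record in this electronic score is sketched below. The field names, the example values and the `preload_seconds` helper are hypothetical; only the listed kinds of information, the four colours and the two-second preload for streaming sounds come from the text. That buffer sounds are held in memory in full is an assumption.

```python
from dataclasses import dataclass
from enum import Enum

class BarColour(Enum):
    GREEN = "listening for the next note"
    RED = "the following event needs a manual touch"
    PINK = "ready for the touch action"
    BLUE = "loud notes trigger short sound files throughout the event"

@dataclass
class Event:
    number: int                 # event number as marked in the musical score
    sound_file: str             # name of the sound file to play
    length_s: float             # length of the sound file in seconds
    trigger_threshold: float    # microphone trigger threshold
    progress_duration_s: float  # duration shown by the progress bar
    colour: BarColour
    streaming: bool             # True: streamed from storage, False: buffer sound

def preload_seconds(event, stream_preload_s=2.0):
    """Seconds of audio held in memory before the event starts."""
    if event.streaming:
        return min(stream_preload_s, event.length_s)
    return event.length_s  # assumption: buffer sounds are loaded completely
```

For instance, `Event(49, "example.wav", 30.0, 0.5, 30.0, BarColour.BLUE, True)` would describe a streamed, continuously triggering event of which two seconds are preloaded; all of these values are invented for the example.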

Disposition of the Pianos on Stage

In order for the piano lids to reflect the electronic sounds into the concert hall, they must be opened to their maximum height and face the audience. The proposed stage arrangement (Fig. 9) allows for this and enables the pianists to communicate visually with each other.

Technical Requirements for Irrgärten

- two iPhone devices (possible models iPhone 3GS, iPhone 4, iPod touch 4th generation)

- four small, high quality active monitors: two inside each piano, placed on pieces of foam

- two cables to connect the output of the iPhones to the monitors

- power supplies to both pianos for the powered monitors

Conclusion

Though the streamlined technical setup used in Irrgärten facilitates both the rehearsal process and concert preparation, the electronics used in this piece afford fewer compositional possibilities than do those used in Zellen-Linien.
