Hive

An audio programming language informed by the theory of cognitive dimensions

Society is characterized and controlled by code technologies, and yet participation and engagement with code is far from universal (Margolis 2008). Understanding programming and code is a critical part of our modern society (Brennan 2013). By leveraging artistic interest, audio programming promises to narrow this digital divide. The principal goal of my research was to make audio programming languages more inclusive. The project began by addressing the following questions: How can we make audio programming simpler for novice users? How can we teach audio programming more effectively? How can audio programming provide a foundation for broader coding practices?

In answering these questions I carried out a comparative analysis of audio programming languages in terms of Thomas Green’s theory of cognitive dimensions (Green 1996). Informed by this analysis I created Hive, a small audio programming language, which leverages the existing SuperCollider language (McCartney 1996).

Why Audio Programming?

Audio programming can expose people to working with code in creative ways and by doing so may lead to an increased interest in working with code among the general population. Recent research in the arts and humanities has emphasized considerations of accessibility and inclusiveness in connection with digital media programming languages. Sonic Pi (Aaron and Blackwell 2013) facilitates engagement among students by using live coding (Collins et al. 2003) and is built upon the educationally targeted Raspberry Pi boards. The Processing environment has been rapidly adopted by a wide community of artists, designers and media arts educators (Shiffman 2008). The ixi lang live coding environment was designed for simplicity and represents events and patterns spatially, thus merging musical code and musical scores (Magnusson 2011). Both ixi lang and the recent Overtone language (Aaron and Blackwell 2013) are built upon the existing SuperCollider language. More recently there have been developments with regard to web audio, with the creation of the new audio element of HTML5 (Choi and Berger 2013). Each of these programming languages and interfaces demonstrates the growing interest in developing new kinds of creative coding languages for people to work with. Taken together, these recent initiatives show that there is an interest in developing accessible and educational audio programming languages. Ultimately, audio programming can provide a creative foundation to begin working with code, thus allowing users to explore broader aspects of programming and hopefully computer science as a whole.

Hive: A Mini Audio Programming Language

The System Parameters

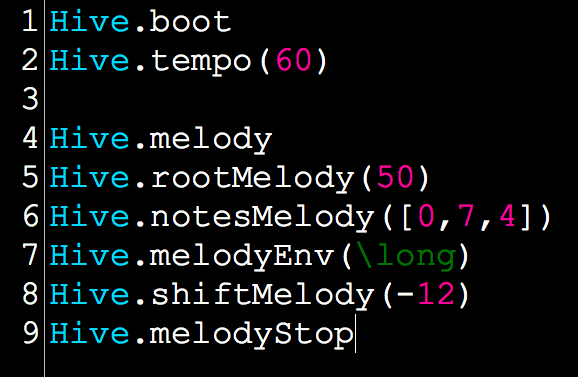

Hive is a mini audio programming language (Fig. 1) built over SuperCollider that is meant to be a platform language for novice users to begin learning about musical programming and live coding. The programming environment allows users to learn about keys, pitches, relative pitch relations, harmony, notes and tempo. While working with the language, users are shielded from audio programming directly. For example, users do not need to name a sine wave oscillator or make a graph of unit generators, but they do have the ability to change and choose the envelopes of preset instruments, the root of those instruments and the notes being played. Hive exposes intervals, tempo and layering as musical variables that people can play with. From a coding standpoint, users become comfortable with writing function and method calls, and are thus able to change the parameters of those calls. Hive allows users to manage four layers of sound. These four layers are produced by booting four preset instruments: melody, harmony, bass and texture. A number of parameters are individually controllable by the user, making it possible to:

- Specify sequences of notes in relative pitch notation;

- Change the root of the sequence of pitches;

- Change the tempo of a sequence or region;

- Shift the root of a sequence of pitches up or down;

- Stop and start all instruments.
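The musical variables listed above can be illustrated outside Hive with a minimal Python sketch. It is not Hive's syntax or implementation: the function names (`realize`, `midi_to_hz`, `seconds_per_beat`) are hypothetical, and it assumes that "relative pitch notation" means semitone offsets from a root expressed as a MIDI note number in 12-tone equal temperament.

```python
# Hypothetical sketch of relative pitch, root, shift and tempo.
# Assumption: sequences are semitone offsets from a root MIDI note.

A4_MIDI = 69     # MIDI note number of A above middle C
A4_HZ = 440.0    # reference tuning frequency


def realize(offsets, root=60, shift=0):
    """Turn offsets relative to `root` into absolute MIDI notes.

    `shift` transposes the whole sequence up or down in semitones.
    """
    return [root + shift + offset for offset in offsets]


def midi_to_hz(midi_note):
    """Convert a MIDI note number to a frequency in hertz."""
    return A4_HZ * 2 ** ((midi_note - A4_MIDI) / 12)


def seconds_per_beat(tempo_bpm):
    """Length of one beat in seconds at the given tempo."""
    return 60.0 / tempo_bpm


melody = [0, 4, 7, 12]                       # a major arpeggio, relative to the root
in_c = realize(melody, root=60)              # rooted on middle C
up_a_tone = realize(melody, root=60, shift=2)  # same shape, two semitones higher
```

Because the sequence is stored relative to the root, changing the root or shifting it transposes every note at once without rewriting the sequence, which is the kind of single small edit the list above describes.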

Users can also add and change the envelope of each instrument based on five presets with specified attack and release times. The different envelopes users can choose from change the way an instrument sounds. For example, the long envelope extends the duration of the notes, while the short envelope shortens the duration. Rev acts as a reverse envelope and plays the sounds backwards, while tri imitates a triangle wave oscillator and perc imitates a percussion instrument.
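One way to picture envelope presets defined by attack and release times is the Python sketch below. It is only an illustration under stated assumptions: the source gives no numeric values, so every attack and release time here is invented, the `Envelope` class and `amplitude` function are hypothetical, and "rev" is modelled simply as a slow attack followed by an abrupt release.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    attack: float   # seconds to rise from silence to full level
    release: float  # seconds to fall from full level back to silence


# Illustrative values only; the real presets' timings are not given in the text.
PRESETS = {
    "long": Envelope(attack=0.05, release=2.0),
    "short": Envelope(attack=0.01, release=0.1),
    "rev": Envelope(attack=1.0, release=0.01),    # slow swell, abrupt end
    "tri": Envelope(attack=0.5, release=0.5),     # symmetric rise and fall
    "perc": Envelope(attack=0.005, release=0.5),  # sharp onset, quick decay
}


def amplitude(env, t):
    """Linear attack/release level (0.0 to 1.0) at time t seconds after onset."""
    if t < 0 or t >= env.attack + env.release:
        return 0.0
    if t < env.attack:
        return t / env.attack
    return 1.0 - (t - env.attack) / env.release
```

Under these assumptions, swapping a preset name changes only the two timing values, while the note sequence, root and tempo stay untouched.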

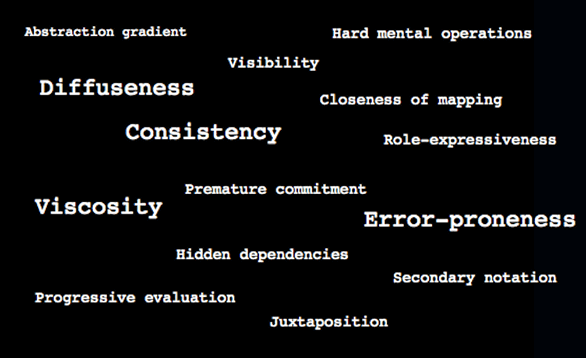

A Series of Dimensions

While working on the design parameters of Hive, Thomas Green’s 14 cognitive dimensions (Fig. 2) were always kept in mind. Although Green’s research focused on visual programming languages, it provided a way to compare and contrast existing audio programming languages. Green uses his 14 dimensions to provide a guide to designing new programming languages, as well as comparing languages. The cognitive dimensions are focused more on the design quality of notations, user interfaces and the programming language, rather than giving a very detailed description of each. In his research Green has spent a great deal of time exploring different design manœuvres; these are simply changes intended to improve the design of a language in some way, or the design of one of the 14 dimensions. What we may come to understand, and what I came to understand with my own research and in the creation of Hive, is that there may be a trade-off in the usability of each dimension. For example, a language can have a very low error proneness, such that it is difficult for users to run into errors, but the language can also have a very high rate of hidden dependencies, meaning changes in one area lead to changes in another without the users’ awareness or understanding.

Green’s research helped me articulate certain design manœuvres and led me to focus on ensuring Hive remains an accessible and user-friendly language. The main objective for my language was to create a tool that had the ability to teach users not only about simple audio programming, but also about live coding and musical performance. Out of the 14 cognitive dimensions I chose to focus my attention on four: diffuseness, consistency, viscosity and error-proneness. I hypothesized that these four dimensions would help me reach my goals for Hive in terms of its accessibility and design.

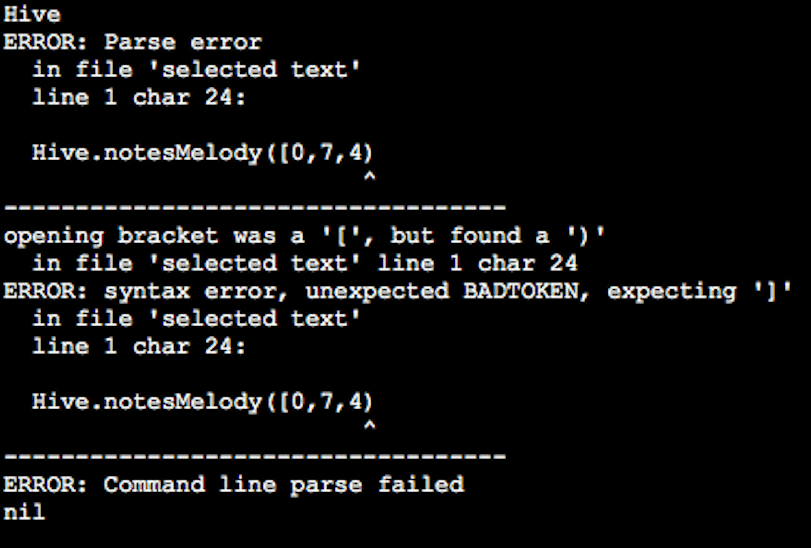

Diffuseness looks at how many symbols or graphic entities are required to express a meaning; in other words, how much writing is required and how much space is taken up to express a meaning. I designed Hive to have a low diffuseness, in order to make a noticeable change or action possible with very little writing.

Consistency focuses on how much of the language can be successfully inferred once some of its notation has been learned. I designed each parameter and all four instruments in Hive to work in the exact same way. This means that the code for each instrument is virtually identical, the only differences being the instrument names and, of course, the way each of the four instruments sounds. To begin to programme in Hive, users only need to learn the main components of the language; since all of the code is virtually identical, it is very easy for users to work with the syntax. I wanted Hive to have a high consistency so that it would be easy for users to begin producing music right away, rather than worry about learning and working through the syntax.

Viscosity focuses on how much effort is required to produce a single change in the notation. I created Hive to have a low viscosity so that it would be easy for users to make a single change. A single character change in Hive will often result in a direct change in the way an instrument sounds.

The last dimension I chose to focus on was error-proneness, which asks how easily the notation of a language can produce mistakes. I wanted Hive to have a very low error-proneness and so I took advantage of SuperCollider’s “post window” in order to communicate errors to users (Fig. 3).

Simple syntax design and consistency also reduce the chance of written errors, since users get used to the formatting of the notation. Hive was created to simplify SuperCollider’s standard notation by concealing and revealing certain aspects of code in order to make it easier for users to work with.

Conclusion

Audio programming has the ability to provide a creative foundation for users to begin working with code. This may in turn result in users exploring broader aspects of programming and computer science. Hive was meant to be a simple and accessible language for users to begin to learn about audio programming, live coding and musical performance. Given recent research initiatives in producing accessible and educational audio programming languages, I imagine that these creative educational outlets for programming will only continue to grow and embed themselves into our everyday society.

Acknowledgements

I would like to express my deepest appreciation for the USRA at McMaster University, in addition to the Faculty of Humanities and the Communication Studies and Multimedia department at McMaster. A special thanks also to Dr. David Ogborn, who has overseen this research project.

Bibliography

Aaron, Samuel and Alan Blackwell. “From Sonic Pi to Overtone: Creative experiences with domain-specific and functional languages.” FARM ’13. Proceedings of the 1st ACM SIGPLAN Workshop on Functional Art, Music, Modelling & Design (Boston MA, USA: 28 September 2013), pp. 35–46.

Brennan, Karen. “Best of Both Worlds: Issues of Structure and Agency In Computation Creation, In and Out of School.” Unpublished doctoral dissertation, Massachusetts Institute of Technology, 2013.

Choi, Hongchan and Jonathan Berger. “Waax: Web audio api extension.” NIME 2013. Proceedings of the 13th International Conference on New Interfaces for Musical Expression (KAIST — Korea Advanced Institute of Science and Technology, Daejeon and Seoul, South Korea, 27–30 May 2013), pp. 499–502.

Collins, Nick, Alex McLean, Julian Rohrhuber and Adrian Ward. “Live Coding in Laptop Performance.” Organised Sound 8/3 (December 2003), pp. 321–330.

Green, Thomas and Marian Petre. “Usability Analysis of Visual Programming Environments: A “Cognitive dimensions” framework.” Journal of Visual Languages and Computing 7/2 (January 1996), pp. 131–174.

Magnusson, Thor. “The ixi lang: A SuperCollider parasite for live coding.” ICMC 2011: “innovation : interaction : imagination”. Proceedings of the 2011 International Computer Music Conference (Huddersfield, UK: CeReNeM — Centre for Research in New Music at the University of Huddersfield, 31 July – 5 August 2011), pp. 503–506.

Margolis, Jane. Stuck in the Shallow End: Education, race and computing. Cambridge MA: MIT Press, 2008.

McCartney, James. “SuperCollider: A New real-time synthesis language.” ICMC 1996. Proceedings of the International Computer Music Conference (China: Hong Kong University of Science and Technology, 1996), pp. 257–258.

Shiffman, Daniel. Learning Processing: A Beginner’s guide to programming images, animation and interaction. Morgan Kaufmann Series in Computer Graphics. Amsterdam: Morgan Kaufmann / Elsevier, 2008.
