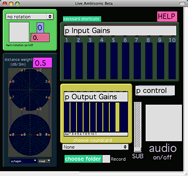

Live Ambisonic Beta

a design for quick and evocative live spatialization of multiple inputs

This paper continues research outlined in “The ‘Beast’ that is Live Spatialization,” presented at the Toronto Electroacoustic Symposium in 2007 and published in eContact! 10.3 — TES 2007 (May 2008).

Abstract

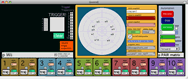

This paper presents Live Ambisonic Beta, software created by Carey Dodge and released in August 2009. It allows quick and evocative live spatialization of multiple inputs from recorded or live sources. The focus in creating the software was interaction design. It uses a single-monitor interface, a mouse, a keyboard and an optional Wii remote. The software was created using Max/MSP 4.6.3 and third-party externals. This paper discusses the technical and theoretical concepts used to create the application, followed by some practical examples. With the presentation of this free software, the author hopes to make complex spatialization techniques more accessible to electroacousticians, musicians and anyone with an interest in sound.

The Software

At the core of Live Ambisonic Beta is the Ambisonics Equivalent Panning technology created by Martin Neukom and Jan C. Schacher of the Institute for Computer Music and Sound Technology (ICST) at the Zurich University of the Arts. Details about their work can be read in their paper “Ambisonics Equivalent Panning,” presented at ICMC 2007. This technology is more efficient than other Ambisonic technologies. Its integration into Max/MSP also makes it very malleable. This is key for live performance, where real-time optimization is essential. Live Ambisonic Beta has been used in performances and rehearsals with up to 10 live inputs, 8 outputs, automation and live control on a MacBook Pro (2.2 GHz Intel Core Duo processor, 2 GB of SDRAM) with the MOTU 896 interface.
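To give a rough idea of why this approach is computationally light, the following minimal Python sketch computes one gain per loudspeaker directly from the angle between the source and each speaker, using the panning function (1/2 + 1/2 cos γ)^m described by Neukom and Schacher, where γ is that angle and m is the order. It is an illustration only, not the code of the Max/MSP external: the function name `aep_gains`, the restriction to the horizontal plane, the ring of eight equally spaced speakers and the order of 3 are all assumptions made for the example.

```python
import math


def aep_gains(source_azimuth_deg, speaker_azimuths_deg, order=3):
    """Return one gain per loudspeaker for a source on the horizontal plane.

    Hypothetical helper: applies (0.5 + 0.5 * cos(gamma)) ** order, where
    gamma is the angle between the source and each loudspeaker.
    """
    gains = []
    for speaker_deg in speaker_azimuths_deg:
        gamma = math.radians(source_azimuth_deg - speaker_deg)
        gains.append((0.5 + 0.5 * math.cos(gamma)) ** order)
    return gains


# Assumed layout: a ring of 8 equally spaced loudspeakers (0, 45, ..., 315 degrees).
speakers = [i * 45.0 for i in range(8)]

# Source placed in the direction of the first loudspeaker.
gains = aep_gains(0.0, speakers, order=3)
```

In this sketch the speaker facing the source receives the largest gain and the opposite speaker the smallest, and raising `order` narrows the spread of the source across neighbouring speakers. No encoding and decoding stages are needed, only one cosine and one power per source and speaker.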

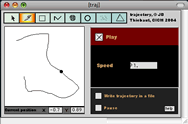

Another third-party external used in Live Ambisonic Beta is the trajectory object by Jean-Baptiste Thiebaut, available on his website. This object automates various trajectories on an xy plane, such as circles, triangles, random paths and hand-drawn paths.
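As an illustration of what automating a trajectory on an xy plane involves, the sketch below generates the successive positions of a source moving along a circle. It is not Thiebaut's implementation: the function name `circle_trajectory`, its parameters and the chosen update rate are assumptions made for the example.

```python
import math


def circle_trajectory(radius, period_s, rate_hz, duration_s):
    """Return a list of (x, y) points tracing a circle around the origin.

    Hypothetical helper: one full revolution takes period_s seconds and
    positions are sampled rate_hz times per second.
    """
    points = []
    steps = int(duration_s * rate_hz)
    for i in range(steps):
        t = i / rate_hz
        phase = 2.0 * math.pi * t / period_s
        points.append((radius * math.cos(phase), radius * math.sin(phase)))
    return points


# One 4-second revolution at 20 position updates per second.
path = circle_trajectory(radius=1.0, period_s=4.0, rate_hz=20, duration_s=4.0)
```

Each point in `path` could then be sent to the panner as the new position of a source. Other shapes follow the same pattern with a different function of time.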

Live Ambisonic Beta combines these two technologies with extensive recording and triggering capabilities and a quick user interface.

The Interface

I stripped down the interface to the essentials of what I believe to be necessary and useful for live spatialization. There are minimal extra features. This was done for two reasons:

- Processing efficiency. Live processing is always more demanding than non-real-time processing. It is essential to have a robust system that will not crash during performance. One can automate trajectories, move multiple inputs simultaneously, etc., but there is no Doppler effect, for example. Also, the Ambisonics Equivalent Panning technology used is much more efficient than traditional Ambisonics.

- Quickness of use. After paring the software down to the essentials, the next step was making everything easily accessible. As much as possible, all aspects of the software are on the “front end” or one click or keyboard shortcut away. That is, almost all of the features of the software are accessible from the two main windows. The hidden windows are mostly used to set up input levels and other parameters that are left alone during performance. Usually only one window (“control”) is used during performance.

Why no External Interface?

Through experience, I have found that incorporating an external interface into Live Ambisonic Beta adds needless complexity. The main purpose of this software is quick, evocative live spatialization during rehearsal, multi-channel sound reinforcement or a speedily assembled performance. Much of the philosophy behind this software is minimalism. Setting up external gear takes too much time in most time-crunched rehearsal schedules, and such gear often needs to be calibrated before it works well. Also, I did not want to make the software specific to or dependent on an external device. Finally, external gear takes up space. For my purposes, I found that less desk space and fewer things to look at was more efficient and useful overall. A mouse and keyboard are all I need.

There is Wii capability incorporated into this software, but I have not found it useful other than for rotating the entire sound space. One should feel free to experiment with this. Adding MIDI control or other communication with external interfaces is not complicated for anyone familiar with Max/MSP.
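Rotating the entire sound space amounts to applying the same rotation about the origin to every source position. The sketch below shows that operation in Python. How the Wii remote data is mapped to an angle inside the patch is not described here, so the example simply assumes that some controller supplies `angle_deg`; the function name `rotate_sources` and the sample positions are likewise hypothetical.

```python
import math


def rotate_sources(positions, angle_deg):
    """Rotate every (x, y) source position counter-clockwise about the origin.

    Hypothetical helper: angle_deg is assumed to come from a controller
    such as a Wii remote, a MIDI knob or the mouse.
    """
    angle = math.radians(angle_deg)
    c, s = math.cos(angle), math.sin(angle)
    return [(x * c - y * s, x * s + y * c) for x, y in positions]


# Three example sources, rotated together by a quarter turn.
sources = [(1.0, 0.0), (0.0, 1.0), (-0.5, -0.5)]
rotated = rotate_sources(sources, 90.0)
```

Because every source is rotated by the same angle, the relative placement of the sources is preserved while the whole scene turns around the listener.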

Live Ambisonic Beta in Practice

The embryonic version of Live Ambisonic Beta was first used during a one-week workshop with Boca del Lupo Theatre. During this workshop we experimented with various spatialization options for an upcoming show. Using this simplified software was essential for quickly prototyping spatialization ideas and presenting them to collaborators both familiar and unfamiliar with sound spatialization.

The first use of Live Ambisonic Beta in its current form was during the Deep Wireless Conference in May 2009. It was used with the Deep Wireless Ensemble, which created four 10-minute performances in one week. These performances included four performers, live sound, live mic/instrument input and recorded sound. Live Ambisonic Beta was extremely useful in this context, where ideas were bounced around very quickly, rehearsals jumped from one piece to another hourly and the time between creation and performance was very compressed.

Live Ambisonic Beta was also used for multi-channel sound reinforcement during the 2009 Deep Wireless Conference. This involved everything from one-person presentations to panel discussions to concerts with one or more musicians. Again, the simplicity of Live Ambisonic Beta was essential in offering the audience quality sound reinforcement with the added excitement of diffusion and spatialization of the live sound.

The Future

Live Ambisonic Beta (or a version thereof) will be used in the upcoming performance An Imaginary Look by Boca del Lupo Theatre. The premiere will be at the Harbourfront Centre in Toronto in the fall of 2010.

A Max 5 version is underway and I will upload it to my website soon, hopefully before 2010.

A request came up during TES 2009 for VBAP (Vector Base Amplitude Panning) instead of, or in addition to, the Ambisonics used in the patch. This could be added to the patch, but due to time constraints I will not be adding it to this version. I may do so in the future.

Bibliography

Neukom, Martin and Jan C. Schacher. “Ambisonics Equivalent Panning.” Proceedings of the International Computer Music Conference 2007. Copenhagen, August 2007. Available on the Institute for Computer Music and Sound Technology website at http://www.icst.net/research/projects/ambisonics-tools

Thiebaut, Jean-Baptiste. “A Graphical Interface for Trajectory Design and Musical Purposes.” Actes des Journées d'Informatique Musicales 2005 (JIM 05). Saint Denis, France, 2–4 June 2005. Available on the author's website at http://jbthiebaut.free.fr/download/thiebaut_trajectory.pdf
