Three Electroacoustic Artworks Exposing Digital Networks

Networks are integral to our lives. They are powerful tools for organization and control, used for file storage and retrieval, political demonstration, publishing, gaming and much more (Galloway and Thacker 2007). It is pertinent for us to understand our role in these network politics, yet it is becoming more difficult to do so as networks are concealed in ever more opaque technological “black boxes”.

Digital technologies may bring about a profound ignorance in users. For example, graphical user interfaces (GUIs), a defining feature of much widely distributed software, offer the convenience of becoming an expert computer user without understanding the computer’s inner workings (Chun 2011). GUIs abstract the dense networks of code that house and respond to a user’s decisions, presenting an intuitive, accessible interface (Kittler 1995; Franke 2010). These abstractions represent black boxes, and apply not only to casual computer users but also to programmers, as abstraction is a fundamental aspect of code design. The former group experiences a “hard black box” that they are unable to open. For the latter group, these are “soft black boxes” which are often, at least in the open source community, reversible by all. It seems everyone who uses a computer is navigating levels of these abstractions, opening and closing black boxes.

My research opened some of these black boxes while following the “hacker ethic: a commitment to information freedom, a mistrust of authority, a heightened dedication to meritocracy, and the firm belief that computers can be the basis for beauty and a better world” (Coleman 2013). I produced three artworks around a central research question: What functional and æsthetic aspects of network technologies can be exploited by contemporary media artists to create immersive environments that inspire interpretation of the nature and ubiquity of digital networks?

The study followed research-creation methodologies of self-reflexive planning, creation and documentation (Kock 2014; Chapman and Sawchuk 2012). The three artworks (Shared Memory-Paired Action, Revolution2 and DACADC) are situated within the broad umbrella category of electroacoustic music and art, reflecting both my experience as a sound artist and the rich history of music being woven into the fabric of computer science and design. Electroacoustics is a distinct approach to making music that was first exemplified by the use of electrical instruments for live performance, then by specialized studio techniques and now by the use of computers in both the studio and live performance (Keane 2000). Electroacoustic practice has become a powerful tool for researching facets of performance from both musical and technological standpoints (Guérin 2006). Now that powerful, inexpensive computers are common, musicians are taking advantage of æsthetic developments that only the computer can facilitate, such as algorithmic composition (Essl 2007; Edwards 2011; Mercier 2001), live coding (Collins et al. 2003; Brown and Sorensen 2009) and networked music (Chew et al. 2004; Weinberg 2005).

As computer music, these works necessarily incorporate software and code into their design, following a tradition of software art that questions our black box understanding of computers, seeking ways to transform banal machines into resistant social actors through contradiction and physical limitations (for example, Forkbomb), or by explicitly poking fun at the cultural homogenization that is reinforced by proprietary software providers (for example, Autoshop and Autoillustrator) [Chun 2011]. In Forkbomb, Autoshop and Autoillustrator, computer code is treated not merely as a tool, but as an object of investigation itself.

“Shared Memory-Paired Action”

Shared Memory-Paired Action is an interactive, telematic audiovisual artwork that combines two distinct geographic spaces. In its first mounting, it involved two art galleries in the city of Hamilton, Ontario. Audience members, as participants, interacted with a sculptural interface to create data. This data was shared over the Internet to telematically link the two spaces, allowing for real-time conversational manipulation of audiovisual stimuli. On two screens in each of the Hamilton Audio Visual Node (HAVN) and the Factory Media Centre (FMC), half of an algorithmic audiovisual composition unfolded according to input from the local space and the other half according to input from the remote space. The work is a product of the interaction among participants and between participants and the system, a common feature of telematic artworks (Ascott 1990).

The work was inspired by the observable disparity in cultural cachet between the two neighbourhoods surrounding the FMC and HAVN. Whereas the former belongs to the James Street North (Jamesville neighbourhood) art district with galleries and boutiques, HAVN lies just outside of this burgeoning scene in the Beasley neighbourhood, which has a poverty rate and a proportion of residents with no completed education that are both higher than the city average (Social Planning and Research Council of Hamilton 2011 and 2012). Anecdotally, HAVN seems to be an uninviting pedestrian experience for some gallery-goers.

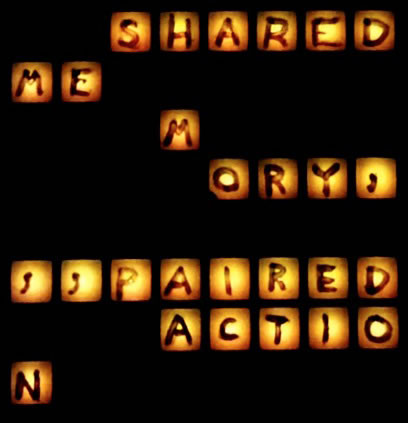

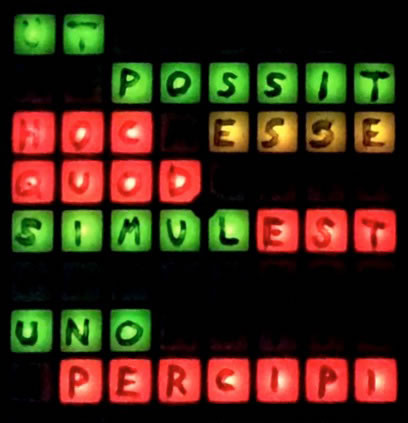

Unique and ephemeral audiovisual environments belong to a tradition that dates back to kinetic light performances (Zdeněk Pešánek, b. 1896) and early “visual music” works (Oskar Fischinger, b. 1900), and is continued by contemporary network artists such as Christiane Paul, Nam June Paik and Gordon Pask. The visual component of Shared Memory-Paired Action comprised two projected video screens in each space. At rest, with no participant interaction, the screens were black. A Max programme waited for participant interaction with the sculptural interface, a Novation Launchpad (a 64-button grid MIDI controller with programmable LEDs) modified with evocative Latin phrases that translate to “to be is to be perceived” and “there are ways to be together” (Figs. 1–2).

Pressing a button instigates a cascade of video cells that reveal discrete areas of an image out of the blackness. This cellular cascade is governed by John Conway’s Game of Life, with successive generations propagating according to a coded metronome. A video cell was revealed (alive) or concealed (dead) according to the Game of Life rules (Gardner 1970):

- Any live cell with fewer than two live neighbours dies, as if caused by under-population.

- Any live cell with two or three live neighbours lives on to the next generation.

- Any live cell with more than three live neighbours dies, as if by overcrowding.

- Any dead cell with exactly three live neighbours becomes a live cell, as if by reproduction.
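The four rules above can be sketched in a few lines of Python. This is an illustrative sketch only: the work itself ran in Max, and the function name `life_step`, the set-of-coordinates representation and the unbounded grid are assumptions made for the example rather than details of the installation.

```python
from collections import Counter

def life_step(live):
    """Return the next generation, given a set of live (row, col) cells."""
    # Count, for every cell adjacent to a live cell, how many live neighbours it has.
    counts = Counter(
        (r + dr, c + dc)
        for (r, c) in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # A cell is alive next if it has exactly three live neighbours (birth or
    # survival), or two live neighbours and is already alive (survival).
    # Every other cell dies or stays dead (under-population or overcrowding).
    return {cell for cell, n in counts.items()
            if n == 3 or (n == 2 and cell in live)}

# A "blinker": three cells in a row that flip between horizontal and vertical.
blinker = {(1, 0), (1, 1), (1, 2)}
next_generation = life_step(blinker)
```

In the installation, each generation would correspond to one tick of the coded metronome, with live cells revealing their portion of the image and dead cells concealing it.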

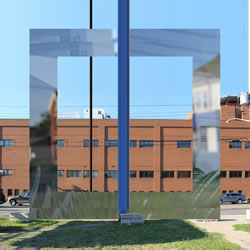

Conway’s Game of Life has a long tradition in computer art because of the interesting patterns it yields with simple input (Synthhead 2008; Blow 2010). In fact, the Max programming environment includes an object that performs the game’s algorithm. A complex mapping deciphers the period of participants’ button presses and, based on this data, decides which of four images is “revealed” for local presentation (Figs. 3–6).

Meanwhile, the musical component was governed entirely by an independent mapping of participants’ input to sonic output. Interactions were first deciphered in Max to determine the identity and timing of each event. Single parameter note events were not mapped one-to-one to button presses. Instead, a compelling experience was achieved by abstracting and remapping participants’ data onto multi-parametric effects. The result is a dynamic, coherent emergence of a cacophonous texture out of a slowly transforming one. The sonic character was inspired by the notion of “Klangfarbenmelodie”, or tone-colour-melody. Complex parameter-mapping is common in virtuosic digital instrument controller design, though I’ve designed this work keeping Wessel and Wright’s (2002) “low entry fee” in mind: an intuitive, easy-to-use system that new and inexperienced users can instantly manipulate in a meaningful way.

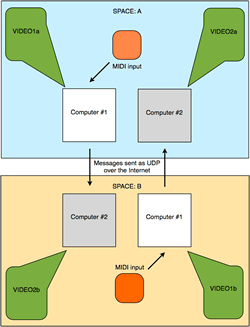

In Shared Memory-Paired Action, the two gallery spaces (HAVN and the Factory Media Centre) are linked over the Internet and share information using the User Datagram Protocol (UDP) [Fig. 7].

When a user presses a button in one space, the input is analysed and manipulates VIDEO1a and VIDEO1b locally. Concurrently, this analysed input is sent from computer #1 in the local space to computer #2 in the paired space as Open Sound Control (OSC) compatible UDP packets that contain only numbers representing the input information. On the remote computer, these numbers instigate in VIDEO2a and VIDEO2b the same cascade pattern that appears locally in VIDEO1a and VIDEO1b. UDP communication is facilitated by the Max udpsend and udpreceive objects, which can transmit messages over a network to a specific address and port. In the present case, UDP datagrams are forwarded through consumer-level networking hardware to create a Metropolitan Area Network (Morreale and Campbell 1990).
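To make the idea of an OSC-compatible UDP packet "containing simply numbers" concrete, the following Python sketch encodes a message with integer arguments and sends it as a single datagram. It is not the Max patch used in the work: the address `/press`, the argument layout (column, row) and the function names are hypothetical, chosen only for illustration.

```python
import socket
import struct

def osc_pad(raw: bytes) -> bytes:
    """Null-terminate and pad to a multiple of four bytes, as OSC strings require."""
    padded_len = (len(raw) // 4 + 1) * 4
    return raw.ljust(padded_len, b"\x00")

def osc_message(address: str, *ints: int) -> bytes:
    """Encode an OSC message whose arguments are all 32-bit integers."""
    type_tags = "," + "i" * len(ints)          # e.g. ",ii" for two integers
    payload = b"".join(struct.pack(">i", n) for n in ints)  # big-endian int32
    return (osc_pad(address.encode("ascii"))
            + osc_pad(type_tags.encode("ascii"))
            + payload)

def send_press(host: str, port: int, column: int, row: int) -> None:
    """Send one button press as a single UDP datagram (no delivery guarantee)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(osc_message("/press", column, row), (host, port))
```

A call such as `send_press("127.0.0.1", 7400, 3, 5)` would send a 20-byte datagram to a listener on the given port; the host and port here are placeholders. Because UDP is connectionless, the sender receives no confirmation that the packet arrived, which suits real-time control data where a late message is of little use.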

Telematics in Practice

Telematic theory suggests we do not have to think, see or feel in isolation. Creativity can be shared and authorship distributed among individuals in diverse locations. The earliest telematic artworks were facilitated by telephone (László Moholy-Nagy’s Telephone Pictures [1923]), exploring the notion of isolated artists and the nature of collaboration. Telephone networks also supported robotic teleaction in which participants acted in one space to elicit a robotic effect in a paired space (Eduardo Kac and Ed Bennett’s telephone-networked Ornitorrinco Project [1989] and Telegarden [1995]). Some telematic artworks create novel gallery realities using remote scientific data (Knowbotic’s Dialogue with the Knowbotic South [1994]), while others bring disparate participants together, allowing audiences to meet with visitors of a paired space (like Maurice Benayoun’s The Tunnel Under the Atlantic [1995]). Modern computers can create immersive virtual environments with audiovisual screens that, with the Internet’s fast data transfer rates, support nearly immediate digital interaction (Michael Dessen’s telematic music performances since 2007, Paul Sermon’s Telematic Dreaming [1992]). Digital networks have supported participatory telepresent collaboration for some time, notably Olivier Auber’s Poietic Generator (1987) and Roy Ascott’s La Plissure du Texte (1980). These artworks championed the idea of distributed authorship, in which geographically disparate artists can manipulate graphical or textual elements in real time together.

Following Delahunt’s definition of installation art as “made for a specific space exploiting certain qualities of that space” (2007), Shared Memory-Paired Action must change to suit its exhibition location. In this inevitable translation of the work to suit new spaces, an interesting artifact emerges as a technical obstacle: commercial networking technologies’ firewalls and their potentially restrictive effect on direct communication between computers. This practical issue reflects contemporary patterns of commercial Internet use. In 1969, the Advanced Research Projects Agency (ARPA) created ARPAnet, an early version of the Internet that communicated using packet-switching technology (Galloway and Thacker 2007). ARPAnet was a decentralized network, so computer storage was distributed. Throughout the latter part of the 20th century, the evolving Internet typically functioned in this way. Perhaps this has fuelled the misconception that the Internet is an “uncontrollable network” (Ibid.).

The Internet landscape has changed in recent years. Most Internet communications are now channelled through a few corporate institutions. These institutions have introduced a convenience, with massive cloud servers hosting a wealth of knowledge that no peer-to-peer sharing agreement could provide. Because users regularly send requests out to large centralized services, typical firewalls block inbound connections. These firewalls are ubiquitous in homes and small offices and present a challenge if one’s intention is to make peer-to-peer connections. In the case of UDP, the routers on both ends of the peer-to-peer connection must be configured to forward inbound packets to a specific computer. This barrier to communication seems to suggest that peer-to-peer connectivity is wrong, and that users should prefer to block packets that have not been deemed “worthy” by the router’s hardware preferences.

“Revolution2”

Revolution2 was part of an on-going investigation into the musical possibilities of multiple laptops arranged in a ring formation. The work was a sequel to my highly choreographed composition for laptop orchestra, Revolution, in which musicians would physically move to the next desk in a clockwise fashion according to a timer, making a change to ChucK code at each desk. Rather than rearranging performers physically, Revolution2 exploits and exaggerates inherent network delays in digital transmission in a “telephone game” for laptop orchestra. Each player is responsible for launching simple SuperCollider synthesizer programmes that travel around a ring of performer/machine combinations. The sonic result is a collaborative, ambiguously spatialized performance that references yet falls apart around a strongly timed beat (Audio 1).

Live coding laptop orchestras are a performative outlet as well as a pedagogical and research tool, and are of specific importance to the present study on networked art. The practice of live coding involves writing and modifying computer programs that generate music in real time. Being able to quickly adapt and execute generative processes is a primary concern for live-coders. Live coding incorporates knowledge of composition, improvisation, musicianship and computer science, providing many dimensions for meta-directed exploration and discovery (Brown and Sorensen 2009). Revolution2 was written for the Cybernetic Orchestra, a laptop ensemble whose works commonly incorporate the sharing of sound data over a digital network to make network music (Ogborn 2012). Based out of McMaster University, the ensemble borrows from popular laptop orchestra practices such as the use of coding languages and individual, localized loudspeakers (Trueman et al. 2006).

Packet Delay, Abstract Mappings and Delocalised Audio

Digital network transmissions necessarily experience a propagation delay because electrical signals take time to travel through a physical medium. Additionally, protocological constraints require the encoding and decoding of transmissions, introducing queuing delay (Hill Associates 2008), transmission delay (Grainger 2012) and processing delay (Ramaswamy, Weng and Tilman 2004), which together make up a larger, summed delay. While the Cybernetic Orchestra commonly attempts to hide network delays to present strongly timed music, Revolution2 intentionally disregards strong timing to betray live audiences’ expectation for coincident sonic events.
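The summed delay described above can be illustrated with a small Python sketch that adds the four components for one link and then accumulates them around a ring of laptops. The class and function names, and all of the numerical values, are hypothetical and are not measurements from a Revolution2 performance.

```python
from dataclasses import dataclass

@dataclass
class Hop:
    """One network link between two laptops (all values are illustrative)."""
    distance_m: float         # length of the physical medium
    signal_speed_mps: float   # propagation speed in that medium
    packet_bits: int          # size of one packet
    link_rate_bps: float      # rate at which bits are placed on the link
    queuing_s: float = 0.0    # time spent waiting in buffers
    processing_s: float = 0.0 # time spent encoding, decoding and routing

    def propagation_s(self) -> float:
        return self.distance_m / self.signal_speed_mps

    def transmission_s(self) -> float:
        return self.packet_bits / self.link_rate_bps

    def total_s(self) -> float:
        return (self.propagation_s() + self.transmission_s()
                + self.queuing_s + self.processing_s)

def ring_delay_s(hops) -> float:
    """Delay accumulated by a message that travels once around the ring."""
    return sum(hop.total_s() for hop in hops)

hop = Hop(distance_m=200.0, signal_speed_mps=2.0e8, packet_bits=8000,
          link_rate_bps=1.0e6, queuing_s=0.001, processing_s=0.0005)
three_laptop_ring = ring_delay_s([hop, hop, hop])
```

With these assumed figures, propagation contributes only a microsecond per hop, while transmission, queuing and processing dominate. The accumulated delay grows with every laptop added to the ring, which is the quantity the piece exaggerates rather than conceals.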

Revolution2 was intended to be scaled to fit diverse musical contexts, including improvised or predetermined performance constraints. This means it was designed to be playable by inexperienced novices and live coding virtuosos alike, on as many laptops as an ensemble preferred. Each musician’s role in Revolution2 is to manipulate a few clearly marked abstract parameters that combine to form a complex whole work. This design is intended to foster a participatory culture around the piece with low barriers to participation and a constructive creative environment (Jenkins et al. 2006). Instead of requiring a great deal of knowledge in sound theory to understand, for example, the difference in “brightness” between a 1000 Hz lowpass-filtered sawtooth wave and one filtered at 10,000 Hz, I have coded (through abstraction) a “brightness” variable that occupies a normalized range from 0 (not bright) to 1 (full bright). Similarly, “atk” and “rel” variables map a 0–1 range of player input onto an envelope shape.

This allows simple, meaningfully described input instructions on the user side to be translated into physical qualities of a sound on the system side (Hunt and Wanderley 2002). Complex synthesizer code may, through this abstracting process, be made more accessible.
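As a rough illustration of this kind of abstraction, the Python sketch below maps a normalized control value onto a physical range. The piece itself was written in SuperCollider, so this is not the performance code; the exponential curve, the function names and the envelope time range (5 ms to 2 s) are assumptions. Only the 1000 Hz and 10,000 Hz cutoff bounds come from the example in the text.

```python
def scale_exponential(value: float, low: float, high: float) -> float:
    """Map a normalized 0-1 control onto [low, high] along an exponential curve."""
    clamped = min(max(value, 0.0), 1.0)  # ignore out-of-range player input
    return low * (high / low) ** clamped

def brightness_to_cutoff_hz(brightness: float) -> float:
    """0 (not bright) gives a 1000 Hz lowpass cutoff; 1 (full bright) gives 10,000 Hz."""
    return scale_exponential(brightness, 1000.0, 10000.0)

def envelope_seconds(atk: float, rel: float) -> tuple:
    """Turn normalized "atk" and "rel" controls into attack and release times."""
    # The 0.005-2.0 second range is a hypothetical choice for this sketch.
    return (scale_exponential(atk, 0.005, 2.0),
            scale_exponential(rel, 0.005, 2.0))
```

An exponential curve is used here because equal steps of the control then correspond to equal frequency ratios, so that the halfway setting `brightness_to_cutoff_hz(0.5)` lands near 3162 Hz rather than at the arithmetic midpoint of 5500 Hz. A linear mapping would be an equally valid design choice for a different musical result.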

The scaling of Revolution2 also concerns spatiality. The demonstration recording included in this article was completed with three laptops but Revolution2 can include as many performers as the network and ensemble can handle. New spatial characteristics of the sounds emerge as more players are added to the ring. Keeping in mind that each member of a laptop orchestra has their loudspeaker physically close to their laptop and each speaker plays every musician’s note events, the work suggests to audiences that music made with digital networks may deliberately obscure a sound’s origins. Decentralized sound sources can be a powerful musical and research tool, being investigated by contemporary ensembles like PowerBooks_UnPlugged (PB_UP), who intentionally fracture the human association between the location of a sound source and its creator (Rohrhuber et al. 2007). Following in the tradition of PB_UP, I would like to experiment with novel configurations of musicians for a Revolution2 performance (for example, abandoning the condensed “stage” set-up for one of machines and sound sources dispersed among the audience to exaggerate the sonic delocalisation).

“DACADC”

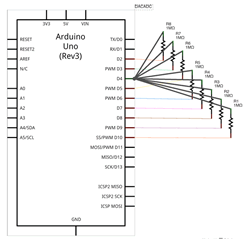

DACADC is an interactive installation that provides a means for direct human intervention into digital transmission. Structurally, the work consists of a laptop connected to a microcontroller concealed within a white box with one translucent face. Eight data wires connect the microcontroller with itself in a “personal area network” protruding from and retreating into the translucent face. Participants are invited to touch the wires, and in doing so, they physically intervene in the digital communication process. At some level of reduction, digital networks are made up of electronic signals and so are not outside the range of physical human intervention, hence the work’s expanded title: Digital-to-Analog-Converter-Analog-to-Digital-Converter.

The Arduino microcontroller, in conjunction with a Capacitive Sensor Library extension created by Paul Badger (2008), facilitates a simple capacitive sensor that generates values according to how long it takes for bit transfer to occur (change of a digital pin from HIGH [+5V] to LOW [0V] state). The transfer is initiated and terminated inside of the Arduino microcontroller, programmed and monitored by a laptop (Fig. 8). When a human touches the wires, the digital transfer takes longer to complete because human capacitance is added to the circuit and it takes more electrons, thus more time, to complete the electrical state change. DACADC repurposes this delayed time value to trigger musical changes in layered synthesizer sequences. By concealing the microcontroller from participants while also displaying the running computer code, the work may inspire in participants a feeling of familiarity (they can see the USB cord in the computer and may deduce that the wire simply splits into eight parts) and closeness (participants literally touch the wires), while at the same time maintaining conceptual distance from these mundane encounters by linking them with novel sonic events. The ultimately analogue nature of digital technologies is rarely brought to our attention as computer users. Consumer technologies tend to hide the communication medium by wrapping it in shielded cables, and some argue that this disconnect can be eliminated by hacking (Ramocki 2008).
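The effect of added human capacitance on the timing can be approximated with the standard resistor-capacitor (RC) charging relation, sketched here in Python. This is a simplified model and not the Arduino library code: the resistor value, the stray and body capacitances, the threshold voltage and the touch factor are all hypothetical.

```python
import math

def state_change_time_s(resistance_ohm: float, capacitance_f: float,
                        v_supply: float = 5.0, v_threshold: float = 2.5) -> float:
    """Time for an RC circuit to move from 0 V to the threshold voltage."""
    # From V(t) = v_supply * (1 - exp(-t / RC)), solved for t at v_threshold.
    return -resistance_ohm * capacitance_f * math.log(1.0 - v_threshold / v_supply)

def is_touched(measured_s: float, baseline_s: float, factor: float = 2.0) -> bool:
    """Treat a reading well above the untouched baseline as a touch."""
    return measured_s > baseline_s * factor

RESISTOR_OHM = 1.0e6   # assumed sense resistor
STRAY_F = 20e-12       # assumed capacitance of the bare wire
BODY_F = 100e-12       # assumed capacitance added by a participant

untouched = state_change_time_s(RESISTOR_OHM, STRAY_F)
touched = state_change_time_s(RESISTOR_OHM, STRAY_F + BODY_F)
```

Because the time is proportional to the total capacitance, the touched reading in this model is six times the untouched one, a difference large enough to detect with a simple threshold such as `is_touched(touched, untouched)`.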

DACADC is informed by the field of algorithmic music composition, as are Shared Memory-Paired Action and Revolution2. Algorithmic composition simply means applying a well defined set of instructions and operations to one’s process of composing music, and can be applied in various ways. Algorithmic models have been used in Western music composition for more than one thousand years. Among the first known examples, described by Hucbald of St. Amande around 895, is the transpositional method for improvising a second voice to a given Gregorian chant (Essl 2007). A more modern example of the technique is Schoenberg’s dodecaphonic technique, a composition method to suggest pitch relations algorithmically while maintaining significant control over most of the music’s other parameters (Edwards 2011; Schoenberg 1975). By the middle of the twentieth century, composers like Iannis Xenakis were extending and adapting techniques like this into stochastic music, incorporating chance operations.

The introduction of computers in the 1960s freed pioneering algorithmic composers to focus on top-down directed creation and artistic exploration by quickly executing tasks associated with a transcribed algorithmic process (Essl 2007). Instead of considering how they will create music, the composer asks: what algorithm will I design to compose music? This shifts the emphasis from the artist’s product to the process they used to create it. Michael Edwards agrees, stating that formalization (algorithmically) of the compositional process allows the composer to discover musical ideas they may or may not be looking for rather than attempting to consciously realize them (Edwards 2011).

DACADC adopts an algorithmic approach to create an interactive musical composition that was inspired by phasing techniques developed by American electroacoustic tape and minimalist composers in the mid-1960s (Mertens 1983; Holmes 2002). When a participant intervenes on the wire sculpture, each point of contact instigates a melodic, temporal or timbral change in one of four sequenced synthesizers, which sound on dedicated single speakers in each corner of a square space. In an isolated state without interaction, each speaker plays the melody, so that it is heard as one monophonic voice diffused over four sound sources. Four wires are dedicated to delaying one iteration of the melody according to the period of contact. The remaining four wires trigger a timbral change depending on whether the wire is touched. Human contact with any of the eight wires will instigate a reordering of the set of pitches that make up the melody. DACADC offers a unique and ephemeral experience each time it is encountered.
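The phasing behaviour described above can be sketched in Python as four voices reading the same looped melody, each with its own delay. This is one possible reading of the description and not the code running in the installation: the tick-based timing, the MIDI note numbers, the function names and the seeded shuffle are assumptions for illustration.

```python
import random

def voice_pitch(melody, tick, delay_ticks):
    """Pitch sounded by one voice at a given tick, or None before it has entered."""
    position = tick - delay_ticks
    if position < 0:
        return None
    return melody[position % len(melody)]

def render(melody, delays, ticks):
    """One row per tick, one column per speaker."""
    return [[voice_pitch(melody, t, d) for d in delays] for t in range(ticks)]

def reorder(melody, seed):
    """Return a reordered copy of the pitch set, as a touch on any wire would trigger."""
    shuffled = list(melody)
    random.Random(seed).shuffle(shuffled)
    return shuffled

melody = [60, 62, 64, 67]                  # hypothetical pitch set (MIDI notes)
unison = render(melody, [0, 0, 0, 0], 4)   # no interaction: one diffused voice
phased = render(melody, [0, 1, 0, 0], 4)   # a touch has delayed the second voice
```

With all delays at zero, every speaker sounds the same pitch on each tick. Once one voice is delayed, it sounds the previous note of the melody while the others move on, producing the shifting canon characteristic of phasing.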

Conclusion

The present study has accounted for the research-creation of works of three sizes: Metropolitan Area Network, Local Area Network and Personal Area Network. The role of “black boxing” in these three works is worthy of further consideration. In Shared Memory-Paired Action, such abstraction facilitates an interactive, low-entry-fee system by mapping simple participant input onto multi-parameter outputs that produce immersive audiovisual feedback. In Revolution2, a few clearly marked and intuitive controls offer an opportunity for novice and expert musical expression. In DACADC, an intentionally white, soft “black box” offers participants an ephemeral, interactive experience while maintaining a high level of system transparency. In future work, I intend to explore this soft black box idea further, emphasizing “transparent boxing”, including both physically transparent boxes for electronics and transparent abstraction within code, informed by the live coding tradition of visually representing performer code for the audience to see.

Bibliography

Ascott, Roy. “Is There Love in the Telematic Embrace?” Art Journal 49/3 (Fall 1990) pp. 241–247.

Badger, Paul. “Capacitive Sensing Library.” Arduino Playground. 2008. Available online at http://playground.arduino.cc/Main/CapacitiveSensor?from=Main.CapSense [Last accessed 9 April 2015]

Benayoun, Maurice. The Tunnel Under the Atlantic. Maurice Benayoun’s website. December 1995. Available online at http://benayoun.com/projet.php?id=14 [Last accessed 9 April 2015]

Blow, Jonathan. “Games as Instruments for Observing Our Universe.” Lecture conducted from Champlain College in Burlington VT, USA. http://braid-game.com/news/2010/02/a-new-short-speech-about-game-design

Brown, Andrew R. and Andrew Sorensen. “Interacting with Generative Music through Live Coding.” Contemporary Music Review 28/1 (February 2009) “Generative Music,” pp. 17–29.

Cáceres, Juan-Pablo and Alain B Renaud. “Playing the Network: The use of time delays as musical devices.” ICMC 2008. Proceedings of the International Computer Music Conference (Belfast: SARC — Sonic Arts Research Centre, Queen’s University Belfast, 24–29 August 2008), pp. 244–250. Available online at https://ccrma.stanford.edu/groups/soundwire/publications/papers/caceres_renaud-ICMC2008.pdf [Last accessed 9 April 2015]

Chafe, Chris, Michael Gurevich, Grace Leslie and Sean Tyan. “Effect of Time Delay on Ensemble Accuracy.” ISMA 2004. Proceedings of the International Symposium on Musical Acoustics (Nara, Japan, 31 March – 3 April 2004). https://ccrma.stanford.edu/~cc/soundwire/etdea.pdf

Chapman, Owen B. and Kim Sawchuk. “Research-Creation: Intervention, analysis and ‘family resemblances’.” Canadian Journal of Communications 37/1 (2012) “Media Arts Revisited (MARs),” pp. 5–26.

Chew, Elaine, Alexander A. Sawchuk, Roger Zimmermann, Chris Kyriakakis, Christos Papadopoulos, Alexandre Francois and Anja Volk. “Distributed Immersive Performance.” Presented at the panel on the Internet for Ensemble Performance? National Association of Schools of Music Annual Meeting (San Diego CA: University of Southern California, 2004).

Chun, Wendy H.K. Programmed Visions: Software and memory. Cambridge MA: MIT Press, 2011.

Coleman, E. Gabriella. Coding Freedom: The ethics and æsthetics of hacking. Princeton NJ: Princeton University Press, 2013.

Collins, Nick, Alex McLean, Julian Rohrhuber and Adrian Ward. “Live Coding in Laptop Performance.” Organised Sound 8/3 (December 2003), pp. 321–330.

Delahunt, Michael. “Installation or Installation Art.” ArtLex Art Dictionary. 2010. http://www.artlex.com

Edwards, Michael. “Algorithmic Composition: Computational thinking in music.” Communications of the ACM 54/7 (July 2011) pp. 58–67.

Emmerson, Simon. “Pulse, Metre, Rhythm in Electroacoustic Music.” EMS 2008 — Musique concrète — 60 Years Later / Musique concrète, 60 ans plus tard. Proceedings of the Electroacoustic Music Studies Network Conference (Paris: INA-GRM and Université Paris-Sorbonne, 3–7 June 2008). http://www.ems-network.org/ems08

Essl, Karlheinz. “Algorithmic Composition.” In Cambridge Companion to Electronic Music. Edited by Nick Collins and Julio d’Escrivan. Cambridge: Cambridge University Press, 2007, pp. 107–125.

Franke, Wojciech. “On the Possibility of Community in Contemporary Technoculture.” Unpublished master’s thesis. Goldsmiths College, University of London, 2010. Available online at http://enajski.pl/Wojciech Franke — On The Possibility Of Community In Contemporary Technoculture (2010).pdf [Last accessed 9 April 2015]

Galloway, Alexander R. and Eugene Thacker. The Exploit: A Theory of networks. Electronic Mediations, Vol. 21. Minneapolis MN: University of Minnesota Press, 2007.

Gardner, Martin. “Mathematical Games: The Fantastic combinations of John Conway’s new solitaire game ‘Life’.” Scientific American 223 (Fall 1970), pp. 120–23.

Guérin, François. “Electroacoustic Music.” The Canadian Encyclopedia. Historica Foundation, 2006 (last rev. 2014). Available online at http://www.thecanadianencyclopedia.ca/en/article/electroacoustic-music-emc [Last accessed 9 April 2015]

Grainger, David. “Transmission versus Propagation Delay.” 2012. http://media.pearsoncmg.com/aw/aw_kurose_network_2/applets/transmission/delay.html

Hill Associates. “Queuing Delay.” Hill Associates Wiki. http://www.hill2dot0.com/wiki/index.php?title=Queuing_delay [Last accessed 25 August 2014]

Holmes, Thomas B. Electronic and Experimental Music: Pioneers in technology and composition. Second Edition. London: Routledge Music/Songbooks, 2002.

Hunt, Andy and Marcelo M. Wanderley. “Mapping Performer Parameters to Synthesis Engines.” Organised Sound 7/2 (August 2002) “Mapping in Computer Music,” pp. 97–108.

Jenkins, Henry, Ravi Purushotma, Katharine Clinton, Margaret Weigel and Alice J. Robinson. Confronting the Challenges of Participatory Culture: Media education for the 21st century. Digital Media and Learning Initiative. Chicago IL: The John D. and Catherine T. MacArthur Foundation, 2006. http://www.newmedialiteracies.org/wp-content/uploads/pdfs/NMLWhitePaper.pdf

Kac, Eduardo and Ed Bennett. Ornitorrinco Project. Telepresence Art. http://www.ekac.org/ornitorrincom.html

Keane, David. Electroacoustic Music in Canada: 1950–1984. eContact! 3.4 — Histoires de l’électroacoustique / Histories of Electroacoustics (July 2000). Available online at https://cec.sonus.ca/econtact/Histories/EaMusicCanada.htm [Last accessed 9 April 2015]

Kittler, Friedrich. “There Is No Software.” CTheory. 18 October 1995. http://www.ctheory.net/articles.aspx?id=74

Knowbotic Research. “Dialogue with the Knowbotic South.” Medien Kunst Netz / Media Art Net. 1994. http://www.medienkunstnetz.de/works/dialogue-with-the-knowbotic-south

Kock, Ned. “Action Research: Its nature and relationship to human-computer interaction.” The Encyclopedia of Human-Computer Interaction. Edited by Mads Soegaard and Rikke Friis Dam. 2nd Ed. Aarhus, Denmark: The Interaction Design Foundation, 2014. Available online at https://www.interaction-design.org/encyclopedia/action_research.html [Last accessed 9 April 2015]

McLean, Alex. forkbomb.pl [Software]. runme.org. http://runme.org/project/+forkbomb/

McLean, Alex and Geraint Wiggins. “Texture: Visual Notation for Live Coding of Pattern.” ICMC 2011: “innovation : interaction : imagination”. Proceedings of the 2011 International Computer Music Conference (Huddersfield, UK: CeReNeM — Centre for Research in New Music at the University of Huddersfield, 31 July – 5 August 2011), pp. 621–628

Mercier, Lina. “Music, Arts, and Technology. A Critical Approach (review).” Trans. Daniel Sellal. Computer Music Journal 25/4 (Winter 2001) “Sound in Space,” pp. 91–92.

Mertens, Wim. American Minimal Music: La Monte Young, Terry Riley, Steve Reich, Philip Glass. Trans. J. Hautekiet. London: Kahn & Averill; New York: Alexander Broude, 1983.

Morreale, Patricia A. and G.M. Campbell. “Metropolitan-Area Networks.” Spectrum, IEEE 27/5 (May 1990), pp. 40–42.

Ogborn, David. “Composing for a Networked, Pulse-Based, Laptop Orchestra.” Organised Sound 17/1 (April 2012) “Networked Electroacoustic Music,” pp. 56–61.

Ramaswamy, Ramaswamy, Ning Weng and Wolf Tilman. “Characterizing Network Processing Delay.” GLOBECOM’04. Global Telecommunications Conference 2004 (29 November – 3 December 2004). IEEE, vol. 3, IEEE 2004, pp. 1629–1634.

Ramocki, Marcin. “DIY: The Militant Embrace of Technology.” In Transdisciplinary Digital Art: Sound, vision and the new screen. Edited by Randy Adams, Steve Gibson and Stefan Müller Arisona. Berlin/Heidelberg, Germany: Springer-Verlag, 2008.

Rohrhuber, Julian, Alberto de Campo, Renate Wieser, Jan-Kees van Kampen, Echo Ho and Hannes Hölzl. Purloined Letters and Distributed Persons. Presented at the 2007 Music in the Global Village Conference (Budapest, 6–8 September 2007).

Schoenberg, Arnold. Style and Idea: Selected writings of Arnold Schoenberg. Oakland CA: University of California Press, 1975.

Social Planning and Research Council of Hamilton. “Beasley Neighbourhood Profile.” Beasley Neighbourhood Action Plan. November 2011. Available online at http://www.hamilton.ca/NR/rdonlyres/D45802AF-B778-4FE3-9D72-23F93E488238/0/Beasley_Neighbourhood_Plan_final.pdf [Last accessed 9 April 2015]

_____. “Neighbourhood Profile.” Jamesville Neighbourhood Action Plan. March 2012. Available online at http://www.hamilton.ca/NR/rdonlyres/CC12AED7-EDCF-4F1A-A93E-91AA29C91097/0/JamesvilleActionPlanBooklet_WEB.pdf [Last accessed 9 April 2015]

Synthhead. “glitchDS — Cellular Automaton Sequencer for the Nintendo DS.” Synthtopia. 29 May 2008. Available online at http://www.synthtopia.com/content/2008/05/29/glitchds-cellular-automaton-sequencer-for-the-nintendo-ds [Last accessed 9 April 2015]

Trueman, Dan. “Why a Laptop Orchestra?” Organised Sound 12/2 (July 2007) “Language,” pp. 171–179.

Trueman, Dan, Perry R. Cook, Scott Smallwood and Ge Wang. PLOrk: The Princeton Laptop Orchestra, Year 1. ICMC 2006. Proceedings of the International Computer Music Conference (New Orleans, USA: 2006). Available online at http://soundlab.cs.princeton.edu/publications/plork_icmc2006.pdf [Last accessed 9 April 2015]

Wang, Ge and Perry R. Cook. ChucK: A concurrent, on-the-fly audio programming language. ICMC 2003. Proceedings of the International Computer Music Conference (Singapore: National University of Singapore, 29 September – 4 October 2003), pp. 219–226.

Wessel, David and Matthew Wright. “Problems and Prospects for Intimate Musical Control of Computers.” Computer Music Journal 26/3 (Fall 2002) “New Music Interfaces,” pp. 11–22.
