CrossTalk

A Reflection on the development of an interactive performance

As a computer musician, I am intrigued by existing and emergent relationships between performers, audiences and music technology. I create systems that enable performers — and in the case of CrossTalk, audiences — to enact control over audio signal-processing parameters and triggering of events in real time.

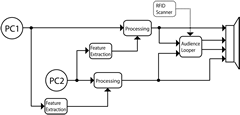

A performance of CrossTalk involves two vocalists on stage, each with their own microphone. Additionally, computer software processes their voices and a sensor allows members of the audience to interact with the performance. There is no fixed media, which means that there is no pre-recorded audio used in the work. As an extension of using feature extraction to control actions and processing, CrossTalk’s Pure Data patch maps data procured from one singer to control the other singer’s audio processing, and therefore each singer is at once both a performer and a controller of the other’s signal. The audience experiences and interacts with the singers through the use of individual radio frequency identification (RFID) tags, mapped to audio looping.

Thus CrossTalk is a live performance piece about control. It explores the ways in which control affects relationships between performers, performers and their audience, and of the audience members amongst themselves. In this article, I will detail the conception of this interactive musical performance, and will reflect on how it developed over the course of three performances.

Development

Audience Interaction

CrossTalk dates back to October 2012, when I was offered an opportunity to develop a performance for the Festival of Original Theatre (FOOT) at the Centre for Drama, Theatre and Performance Studies of the University of Toronto, to be presented on February 1, 2013. At CrossTalk’s inception, I wanted to develop a system that would give singers some indication of the audience’s experience and response to their performance in real time. I decided this could be achieved through the use of a sensor to trigger the sampling of one second of the processed audio whenever a new audience member entered the space, and loop it. That loop — their first impression — ceased only once that audience member left the space.

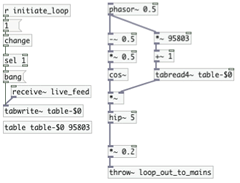

I explored several possible means of automating this interactivity, from motion detection to capacitive proximity detection, and eventually settled on using radio frequency identification (RFID) tags and an RFID scanner. A critical element was that every RFID tag had a unique identifier. One tag could be distributed to each audience member, and could therefore serve to individualize their interactivity in the performance. I opted for the Parallax Serial RFID Card Reader, as it was compatible with inexpensive 125 kHz passive RFID tags. I connected it to an Arduino UNO microcontroller for interfacing with my laptop and modified existing PRFID Arduino code (Djmatic 2013) to stream the tag data to Pure Data. When an RFID tag is scanned into Pure Data, it triggers the dynamic generation of a new loop patch that is linked to the ID of that tag (Fig. 1).
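The scan-to-loop behaviour described above can be sketched roughly in Python. This is a minimal illustration and not the Pure Data patch itself. It assumes that the reader delivers each tag as a 12-byte serial frame (a start byte, ten ASCII ID characters and a stop byte), that a second scan of the same tag ends its loop, and that audio runs at 44.1 kHz. The names `parse_frame` and `LoopRegistry` and the example tag ID are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

SAMPLE_RATE = 44100   # assumed sample rate, not stated in the article
LOOP_SECONDS = 1.0    # one second of processed audio, as described in the text

START_BYTE = 0x0A     # assumed frame start byte
STOP_BYTE = 0x0D      # assumed frame stop byte
ID_LENGTH = 10        # assumed number of ASCII characters in a tag ID


def parse_frame(frame: bytes) -> Optional[str]:
    """Return the tag ID from one serial frame, or None if it is malformed."""
    if len(frame) != ID_LENGTH + 2:
        return None
    if frame[0] != START_BYTE or frame[-1] != STOP_BYTE:
        return None
    return frame[1:-1].decode("ascii", errors="replace")


@dataclass
class LoopRegistry:
    """Keeps one audio loop per tag ID currently 'in the space'."""
    loops: Dict[str, List[float]] = field(default_factory=dict)

    def on_scan(self, tag_id: str, recent_audio: List[float]) -> str:
        # Assumption: a tag that already owns a loop is leaving, so stop it.
        if tag_id in self.loops:
            del self.loops[tag_id]
            return "stopped"
        # Otherwise capture the most recent second of audio as a new loop.
        n = int(SAMPLE_RATE * LOOP_SECONDS)
        self.loops[tag_id] = list(recent_audio[-n:])
        return "started"
```

In use, each frame read from the serial port would be passed through `parse_frame`, and any valid ID handed to `on_scan` together with a buffer of the most recent processed audio. Keying the loops by tag ID is what ties each loop to one audience member.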

I had intended to embed an RFID tag within the lanyard of each FOOT attendee. Concealing the tag would have meant that the audience would not have been aware of the agency which CrossTalk offered to them. I wanted to position the scanner at the entrance to the performance space. However, the only RFID hardware I could afford had a limited range of up to three centimetres. This meant that a noticeable scanning action would need to take place as each person walked through the entrance. Rather than veil the action with some other gesture, which might invoke other meanings, I decided to make the RFID scanning explicit, engaging each audience member as they entered the space. I posited that fluctuations in the size of the audience would have an impact on everyone’s experience, and that, depending on individual tastes and sensitivities, some audience members would find the increasingly entropic output too disturbing and would leave, thus ending their loops. Others would leave after finding the output redundant when too few loops were sounding, while those who appreciated this would enter and stay. This was their agency: participation in the continuous self-tuning of the audience.

The Performer-Controller

During a lecture at Concordia University, Dr. Eldad Tsabary asked, “What are the implications for creativity when someone else is in control of your sound?” (Tsabary 2013). This provocative question strikes at the core of what CrossTalk is all about. I would take the question even further and ask: how does the performance change in this situation, and what constraints and affordances are placed upon the singer’s creative and technical ability?

A CrossTalk singer is asked to adopt a frame of mind that I call the performer-controller. It is a dual role where the performer contributes the audio source material to be manipulated (the harmonic, the melodic, the rhythmic, perhaps the semantic), and the controller manipulates this source material (Fig. 2).
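The dual role can be made concrete with a small Python sketch of the cross-mapping, in which a feature taken from each singer becomes the control value for the other singer's processing. The article does not say which features the patch extracts or which parameters they drive, so peak level and a generic parameter range are assumptions here, and the function names are hypothetical.

```python
from typing import Sequence, Tuple


def peak_level(block: Sequence[float]) -> float:
    """Peak absolute amplitude of one block of samples (assumed feature)."""
    return max((abs(x) for x in block), default=0.0)


def scale(value: float, lo: float, hi: float) -> float:
    """Clamp value to 0..1, then map it linearly onto the range lo..hi."""
    value = min(max(value, 0.0), 1.0)
    return lo + value * (hi - lo)


def cross_control(block_a: Sequence[float],
                  block_b: Sequence[float],
                  lo: float = 0.0,
                  hi: float = 1.0) -> Tuple[float, float]:
    """Return (control_for_a, control_for_b).

    Singer B's level sets the parameter applied to singer A's voice,
    and singer A's level sets the parameter applied to singer B's voice.
    """
    control_for_a = scale(peak_level(block_b), lo, hi)
    control_for_b = scale(peak_level(block_a), lo, hi)
    return control_for_a, control_for_b
```

Calling `cross_control` once per audio block would give each singer continuous control over the other's sound while still supplying source material of their own.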

At each rehearsal, I ask the performer-controllers to consider whether it is possible to truly be in this dual role mindset, or inhabit only one role at a time — alternating between being a performer and controller. Throughout the development of the piece, I wondered which facets could be considered instruments. I now like to think of the performer-controllers as instruments playing each other. Each individual involved in CrossTalk — performer-controller and audience member alike — truly changes the instrument.

Engineering Intent

CrossTalk has been performed three times, and each performance quite deliberately involved very different vocalists. I am concerned with the use of an instrument beyond the situation for which it was originally developed; an instrument designer cannot entirely anticipate, nor control, how their product will be used. I refer to this as “engineering intent”. Consider the following analogy: a context involving two rooms and one guitar. I tell a group of people gathered in one of these rooms that I have placed an object in the adjacent room and I ask each of them to enter the room one at a time and interact with that object. If the first person who steps into the room is a professional guitarist and recognizes that the object is an acoustic guitar, they might pick it up and play a song. If the next person to enter the room is not a guitarist but knows what a guitar is, they might pick it up and pluck a simple melody on one string. The next person to enter the room, having never seen an object like this before, picks it up, shakes it and then drops it to the floor where it breaks into pieces. The fourth person who enters is a carpenter who picks up the splintered shards of wood and wire and tries to make sense of them. All of these interactions produce sound, yet some sounds were not likely the intention of the guitar’s luthier, though I am delighted by the notion of a luthier who specializes in making guitars that smash beautifully. I am not interested in dictating all of the ways in which my instruments may be used. I am fascinated with how performers discover, bend and break the constraints of an instrument. It is my opinion that the constraints of an instrument are what give it its extrinsic character; and how a musician overcomes these limitations is what helps us identify the intricacies of our favourite musicians.

This is particularly valid in circumstances such as CrossTalk where performer-controllers are exploring and learning how to use an instrument whose affordances and constraints are not entirely defined. Of course it is not just the performer-controllers who are faced with this challenge, as there is also the matter of how I continue to develop CrossTalk once it is being workshopped and performed.

Performances

Festival of Original Theatre

A persistent curiosity of mine lies in teaching performers how to use a new instrument while its affordances and constraints are still being defined. CrossTalk began as an entirely improvised performance, which meant that while learning how to use the Pure Data patch, the performer-controllers for the Festival of Original Theatre performance [1. The performer-controllers of CrossTalk at the Festival of Original Theatre were Devin Fox, Carlo Meriano and Dwight Schenk.] also had to focus on generating content, and this proved to be too much to focus on at one time. During an early rehearsal, I happened to have a book of poetry with me and suggested that the performer-controllers use it as a script in order to focus their attention on controlling the patch. I was pleased with the result, not only as a teaching device to help the performer-controllers, but also in terms of the creative potential for developing the piece, and so I invited Toronto writer Jonathan Meriano to develop a script for the performance.

Rather than attempt to convey specific meaning through the performance, CrossTalk invites its performers and audience to find meaning for themselves, yet as a writer Meriano is concerned that the reader understand the meaning that he puts into his words. So CrossTalk asked not only its performer-controllers and audience to interpret their own meaning, but also asked this of him as the scriptwriter. Through his experience working with me on CrossTalk, Meriano’s interest began to form around trying to send an image through his text, interpreted by the performer-controller and then passed through the digital signal processing, to see if the audience received the same imagery, or anything at all. Further, as I viewed the performer-controllers of CrossTalk as instruments playing each other, Meriano began to “view CrossTalk’s technology as a character [in his writing]. It was almost as if there was another person in the room” (Meriano 2013).

The performer-controllers initially objected to the audience-generated loops, stating that at the very least they wanted to have control over stopping the loops, lest their live performance be overtaken by a highly entropic audience loop. That this was an issue for them led me to realize that I had failed to adequately explain my intent, ideas and questions behind the entire piece and its interactivity, and had also underestimated what I was asking of the performer-controllers. The performer-controllers’ anxiety about giving control to the audience, their absolute terror that an audience could affect their musical performance, that audience loops could augment the social performance and interfere with their own performance, gave rise to fascinating new dimensions in the evolution of CrossTalk.

I liked the prospect of sonifying the duration of each person existing physically within the performance hall. During the performance something unexpected took place: with the curious white circular RFID chip in hand, a placebo effect emerged where many audience members took it upon themselves to move around the space to see what effect the chip’s location would have on the performance, which of course, was none. By no means, however, was this an invalid interaction! “[T]he performance was emergent. It became how the audience existed in the space together” (Reinhart 2013). There was one glaring oversight that I realized once the performance was underway. The entrance to the performance space connected to a short hallway, and this was where the RFID scanner was set up. As a result the audience-initiated audio loops were captured before that member made it to the space. By the time their corporeal self entered the space, they had already been represented sonically for roughly ten seconds. That they were not present in the space for their own point of articulation meant that they could not identify their own sonic loop amidst other loops and live audio. After this performance I also realized that when using an RFID system there was no way to accurately judge why someone was leaving the performance — it wasn’t possible to determine whether the movement of the audience in and out of the space was due to their discomfort, interest, disinterest or indeed for emergency reasons. In subsequent performances I became much less focussed on why an audience member would enter or leave the performance space.

Data:Salon

In preparation for the next performance held at Eastern Bloc’s Data:Salon, I developed a trainer patch that allowed the performer-controllers [2. The performer-controllers of CrossTalk at Data:Salon were Joseph Browne and Sima Shamsi.] to rehearse alongside a software-based performer-controller that could play back audio files and recordings of their own voices. The Data:Salon iteration of CrossTalk would take the form of a lecture-recital, which meant that the full extent of the audience would already be within the performance space by the time the recital began. I therefore opted to ask the audience members to openly interact with the RFID tags and sensor. While the tags did not have anything to do with sonification of the quantity of audience members, they were still a valid way to engage an audience on an individual basis and as a group.

Toronto Electroacoustic Symposium 2013

The first rehearsal for this performance began with an open discussion with the performer-controllers [3. The performer-controllers of CrossTalk at the 2013 Toronto Electroacoustic Symposium were Elena Marie Stoodley and Eric Biard-Goble.] on the system, my ideas, what would be expected of them, how the patch might be further refined to suit their abilities and interests, and how to compose for them specifically.

I introduced a control-threshold parameter to the patch so that the audio processing would not engage unless a controller sang above a certain level. This extended the controller’s range of expression and provided contrasts between the unprocessed and processed performers’ voices.
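A control threshold of this kind can be sketched in Python as a simple level gate. This is an assumption-laden illustration rather than the logic of the actual patch: the level measure (RMS), the default threshold value and the function names are all hypothetical, and `process` stands in for whatever audio processing the controller drives.

```python
import math
from typing import Callable, List, Sequence


def rms(block: Sequence[float]) -> float:
    """Root-mean-square level of one block of samples."""
    if not block:
        return 0.0
    return math.sqrt(sum(x * x for x in block) / len(block))


def gate_processing(controller_block: Sequence[float],
                    performer_block: Sequence[float],
                    process: Callable[[Sequence[float]], List[float]],
                    threshold: float = 0.1) -> List[float]:
    """Process the performer's voice only while the controller is loud enough.

    Below the threshold the performer's block is passed through unprocessed,
    which produces the contrast between dry and processed voices.
    """
    if rms(controller_block) > threshold:
        return process(performer_block)
    return list(performer_block)
```

With a gate like this, singing quietly becomes a deliberate choice: the controller can leave the other voice untouched, then cross the threshold to bring the processing in.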

The presentation at the symposium also took the format of a lecture-recital. As with the Data:Salon performance, the space at the symposium did not have an entry or exit point to orient the audience and signal the beginning or end of their agency.

Before the performance commenced, I encouraged the audience to play the instrument, and clarified that I considered any action on their part as participatory. It was up to the audience how they chose to do that. The reason for providing this information to the audience beforehand was that an important function of the piece was the augmentation of the social performance, and in the context of performance it was a way to give agency to the audience in order to become controllers themselves. For those who wanted to activate their loop, it would mean leaving their seat and disrupting those who remained seated as they exited their row in order to reach the scanner at the front of the room. I acknowledged that some would consider such behaviour rude or disruptive, and that might be enough to inhibit their interaction. However, remaining seated could afford a better opportunity to listen and notice the changes in the performance relative to their position in the room. As had been the case with both earlier performances of CrossTalk, there would always be a chance that some audience members would, with a mischievous grin, palm several chips. As with the “two rooms, one guitar” analogy, I consider all such types of behaviour as valid interactions in the piece.

Role of the Composer

Throughout the entire run of CrossTalk, I had not acknowledged myself as an active agent in the performance. In making the action of scanning the RFID tags explicit to the audience, and in coordinating this action myself, I was directly engaging with the audience members, yet I failed to address this in lectures and did not consider what influence this would have on the behaviour of the performer-controllers and audience members. Following the lecture-recital at the Toronto Electroacoustic Symposium, some attendees remarked that my actions in the performance had ritualistic connotations (Vidiksis 2013), while others posited that the distribution and collection of RFID tags from the audience by a single person at the front of the stage was reminiscent of the Eucharist (Naylor 2013). Although I am familiar with this ritual in Christianity, as I had identified as a Catholic for an earlier period of my life, I had not intended to represent the sacrament of Holy Communion in CrossTalk. How appropriate then to discover that I cannot engineer the intent of the audience interaction, just as an instrument designer cannot engineer the intent of the person that plays their instrument.

Conclusion

When performing, I am often anxious about how an audience will perceive my performance. At the outset, I envisioned CrossTalk as a system capable of revealing to its performer-controllers how the audience experienced the performance in real time, enabling a dynamic feedback loop between both parties. However, with each new rendition of the piece, my interest and intent gradually shifted more towards the behaviour of performer-controllers and audience members as individuals and as groups.

The capacity for a system to not only amplify the complexity of social interaction in the reception of an artistic work, but also to make use of it, is something that I plan to explore further in future works.

Bibliography

Djmatic. “Code for Using the Arduino with the Parallax RFID Reader.” The Arduino Playground. http://playground.arduino.cc/Learning/PRFID [Last accessed 24 January 2013]

Meriano, Jonathan. Private correspondence. 8 November 2013.

Naylor, Steven. Private correspondence. 18–19 August 2013.

Reinhart, Michael. Private correspondence. 30 July 2013.

Tsabary, Eldad. “Live EA with CLOrk Laptop Orchestra.” Lecture in Montréal, 2013.

Vidiksis, Adam. Private correspondence. 18 August 2013.
